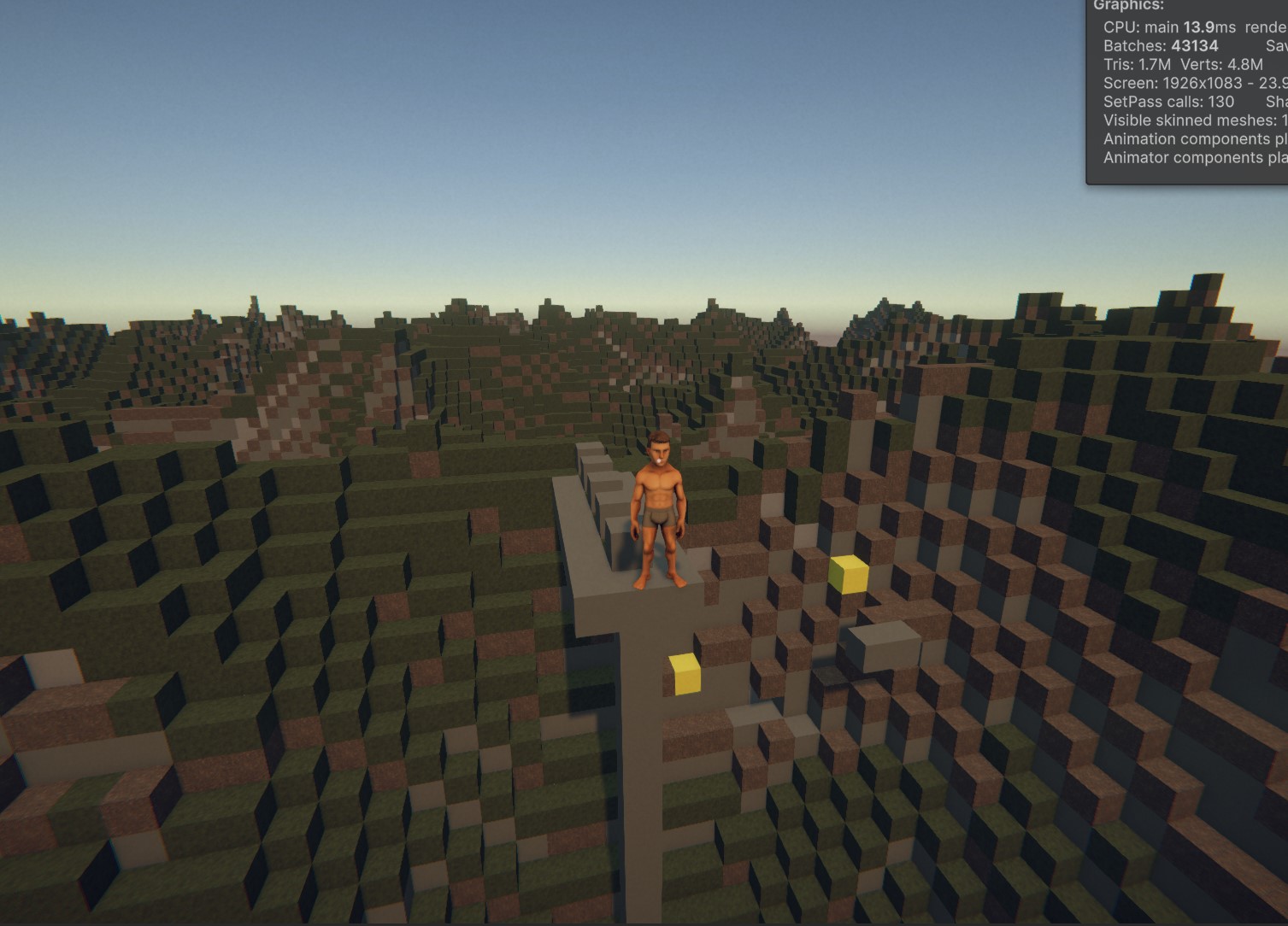

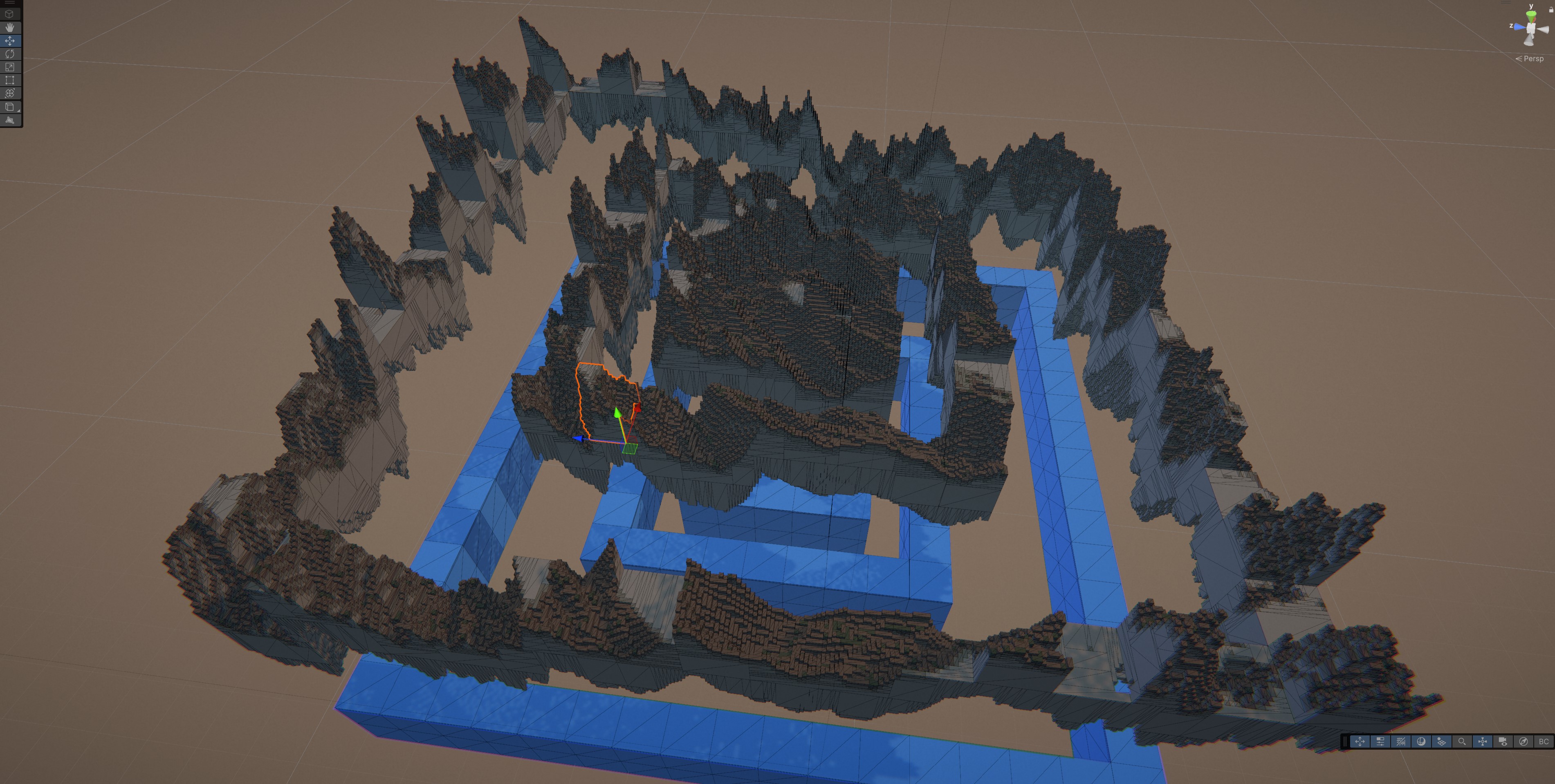

NULLSEED is a post-collapse voxel survival sandbox set generations after society fell due to misuse of advanced technology. Players live out of a camper van, build an off-grid base, and travel between small settlements to trade, take work, and gather supplies.

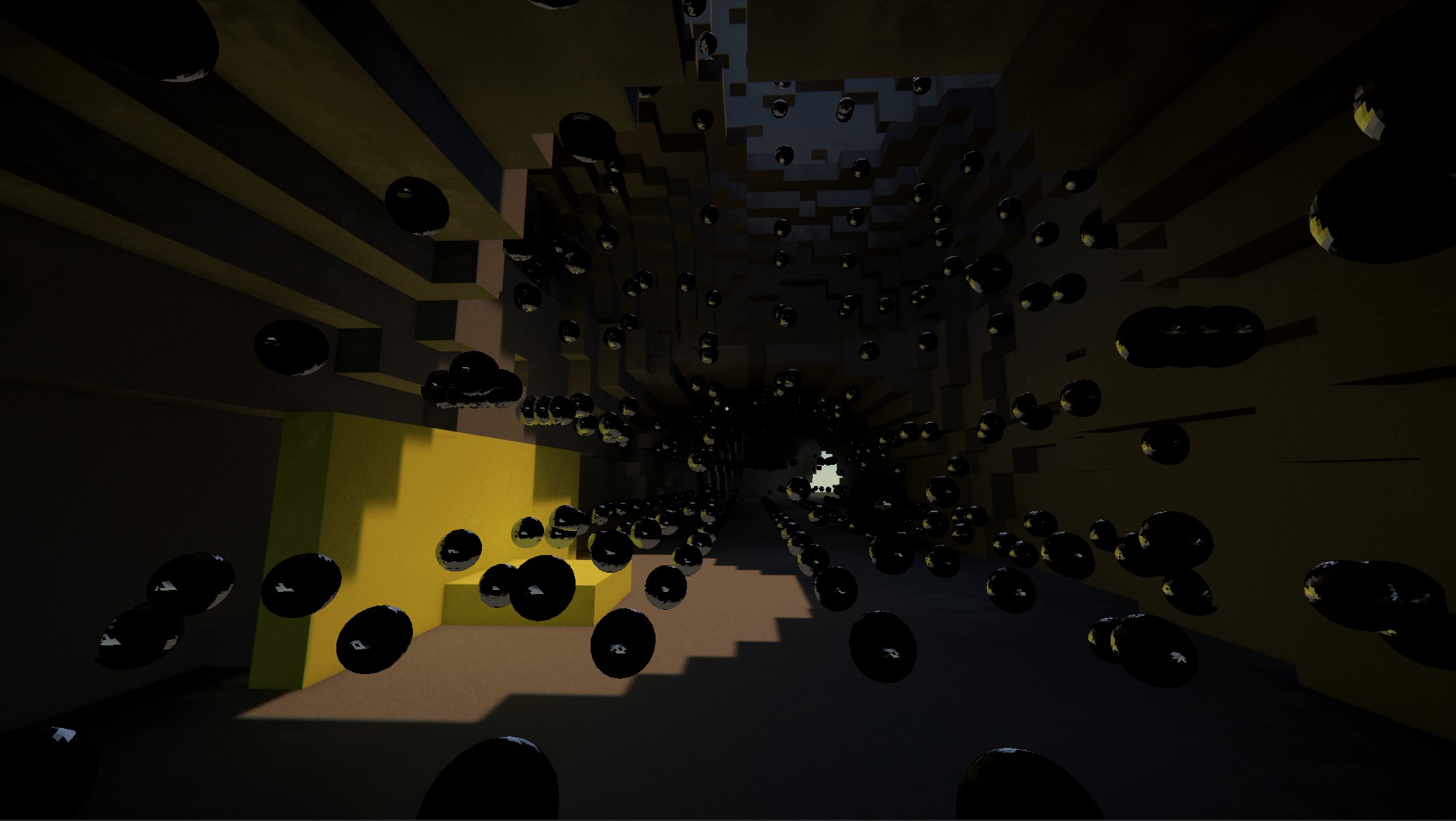

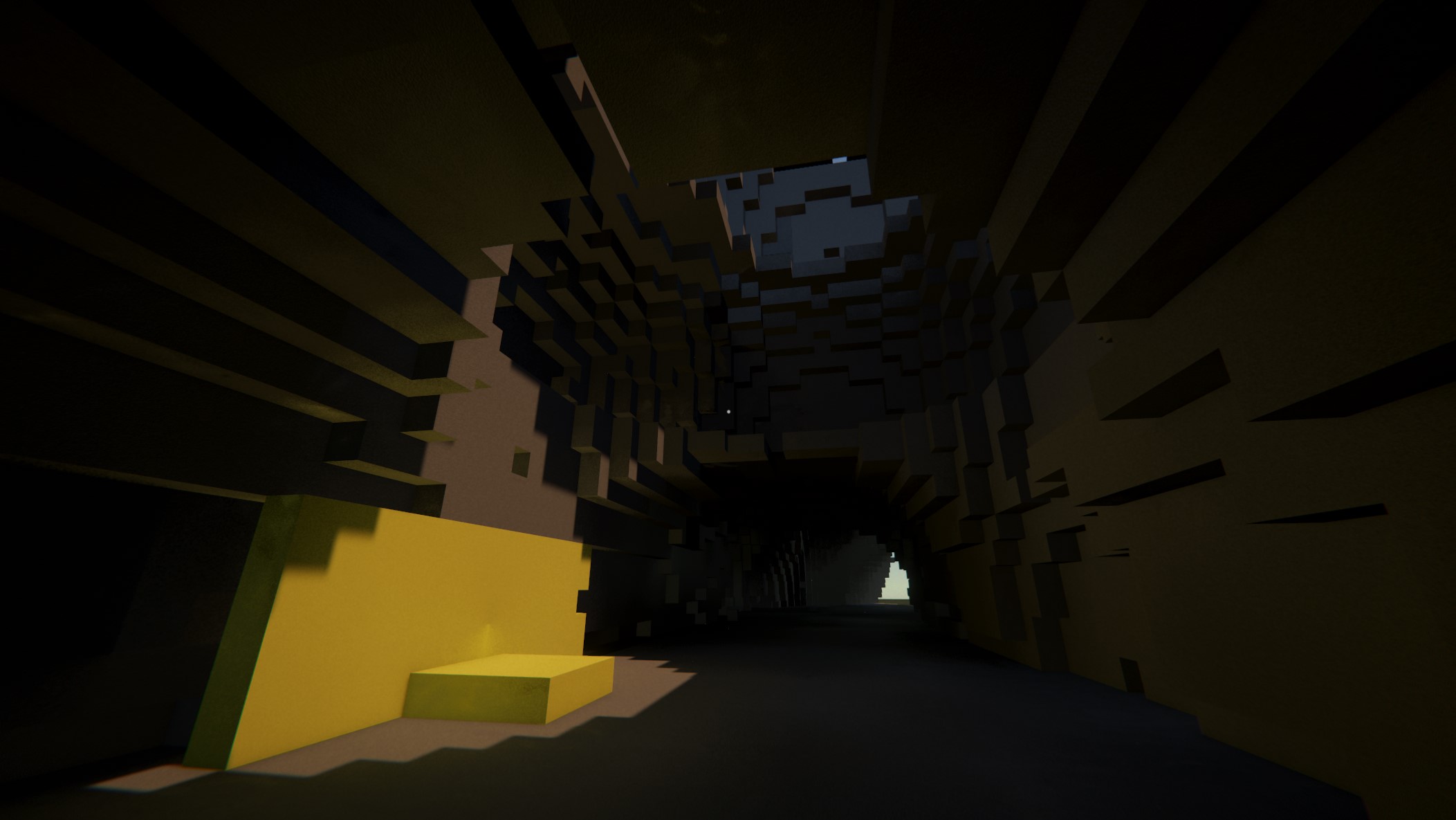

The most valuable loot and the most dangerous problems come from null-zones. These are localized pockets of contamination where matter and space behave inconsistently. Players enter null-zones for short, high-stakes salvage runs. They stabilize hazards, recover artifacts, and extract before conditions worsen.

The game supports solo play and co-op. It aims for fast drop-in sessions while keeping long-term persistence through base building, world changes, and relationships.

This simple page documents the development progress and the interesting problems I run into along the way.

If you want to contribute, help with problems or ask questions, you can contact me on Discord @aze.music

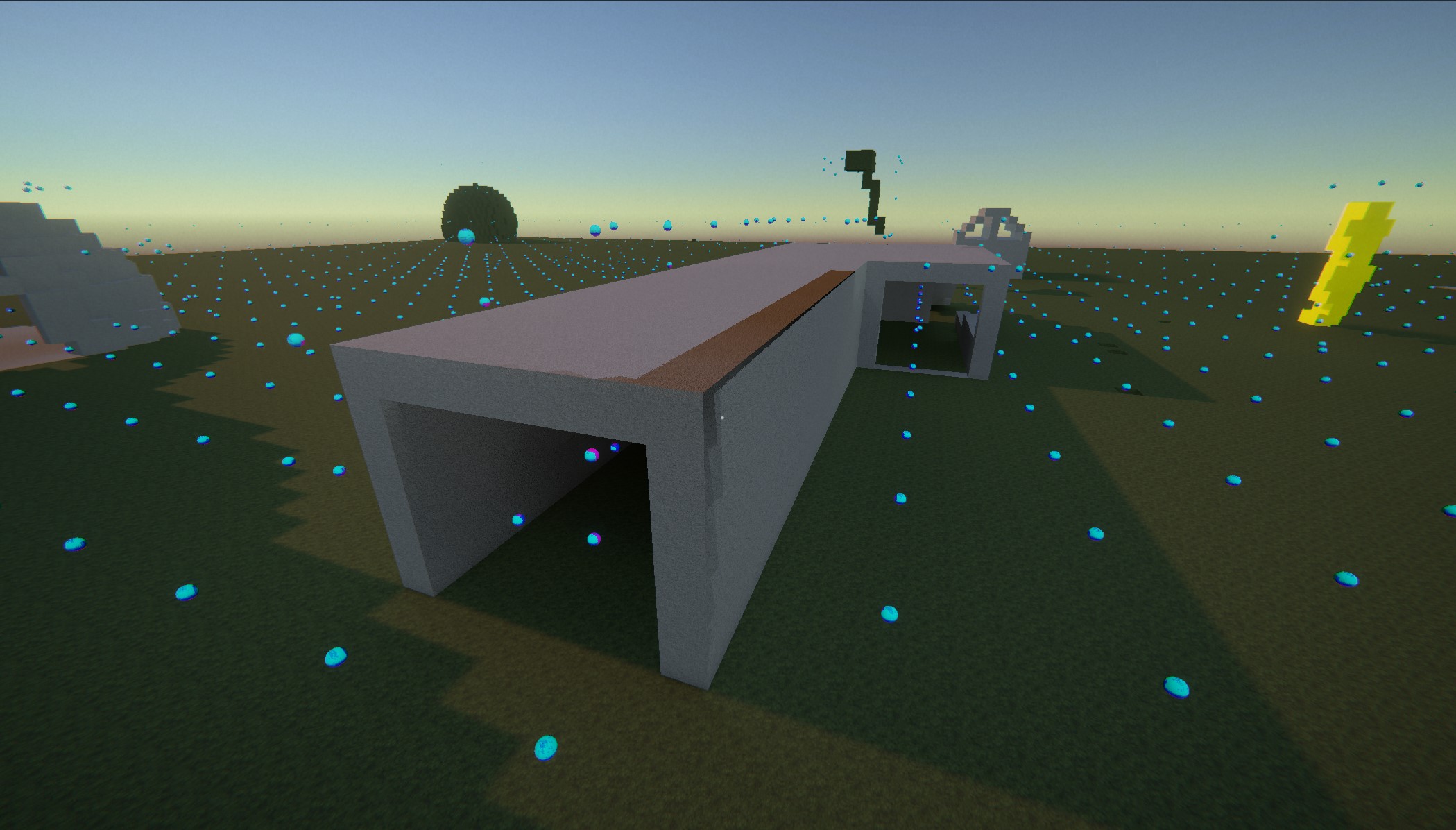

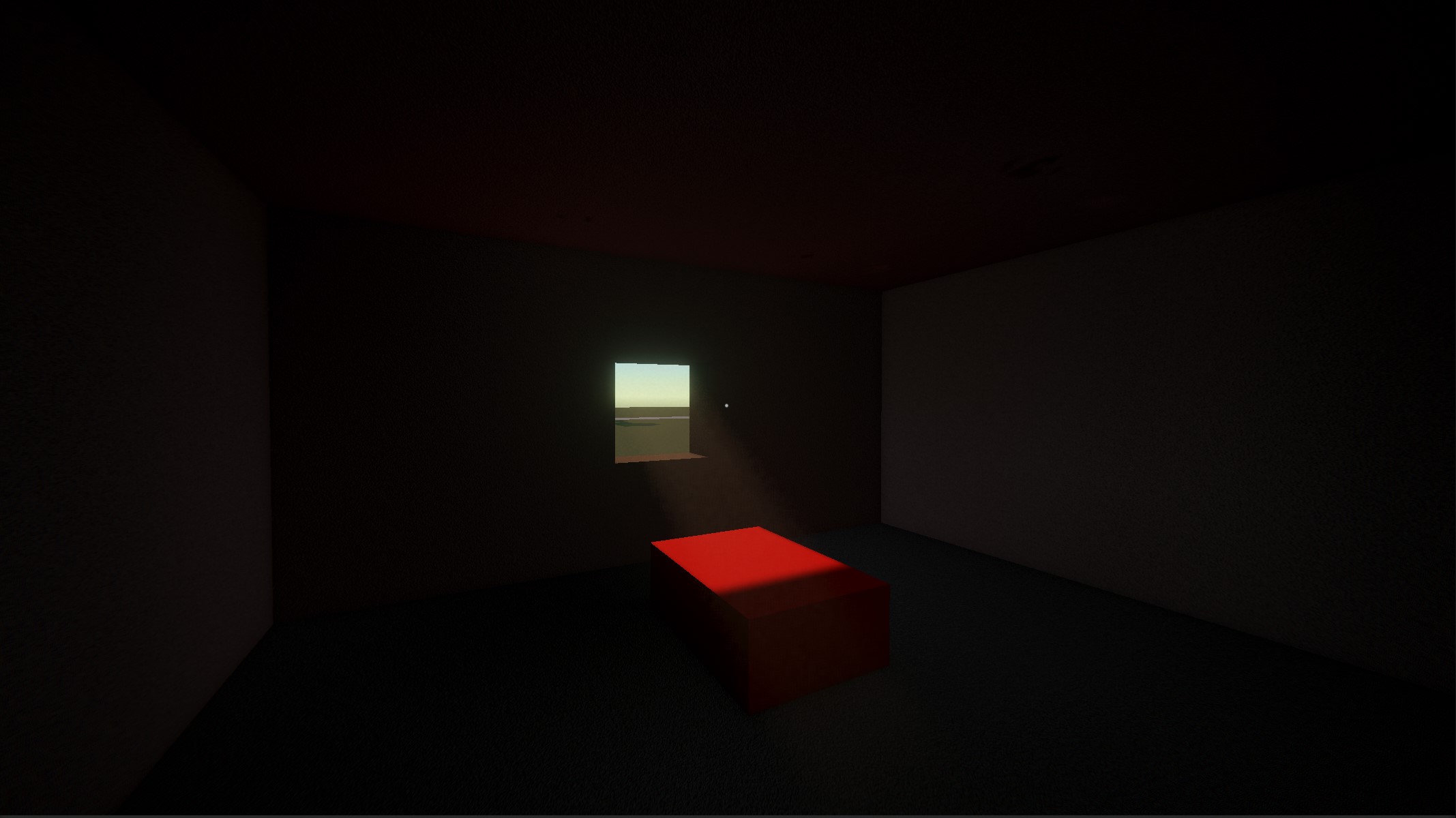

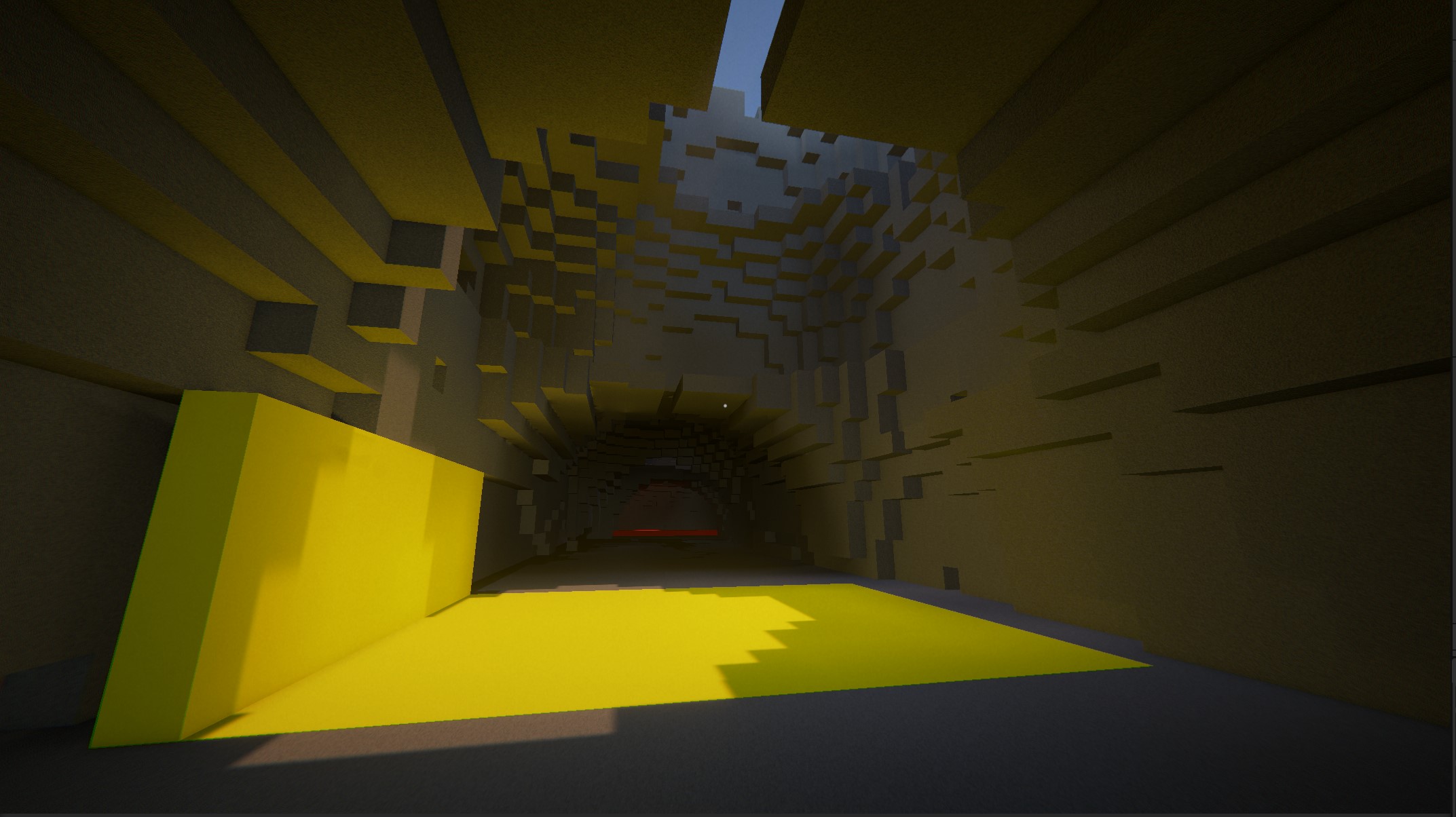

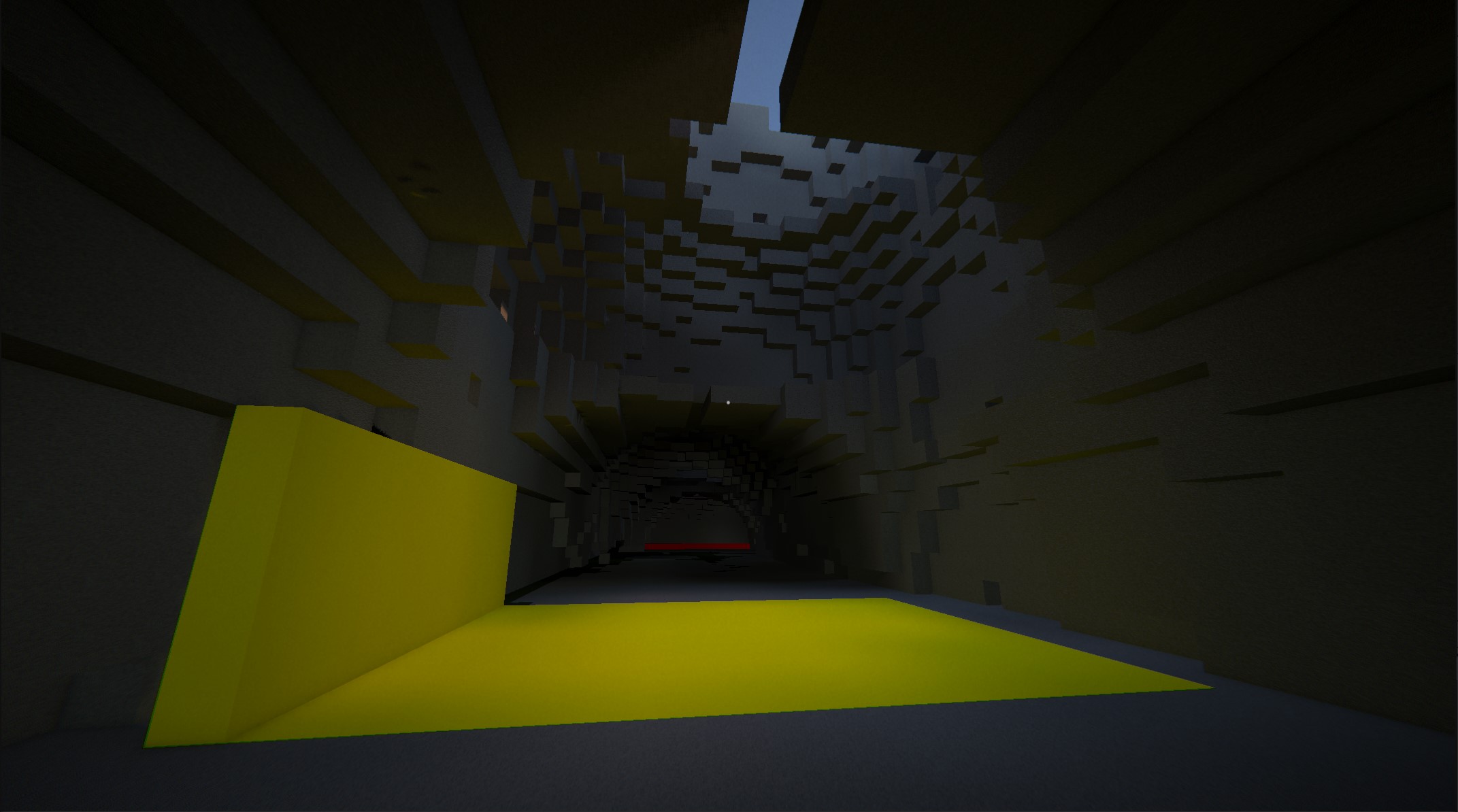

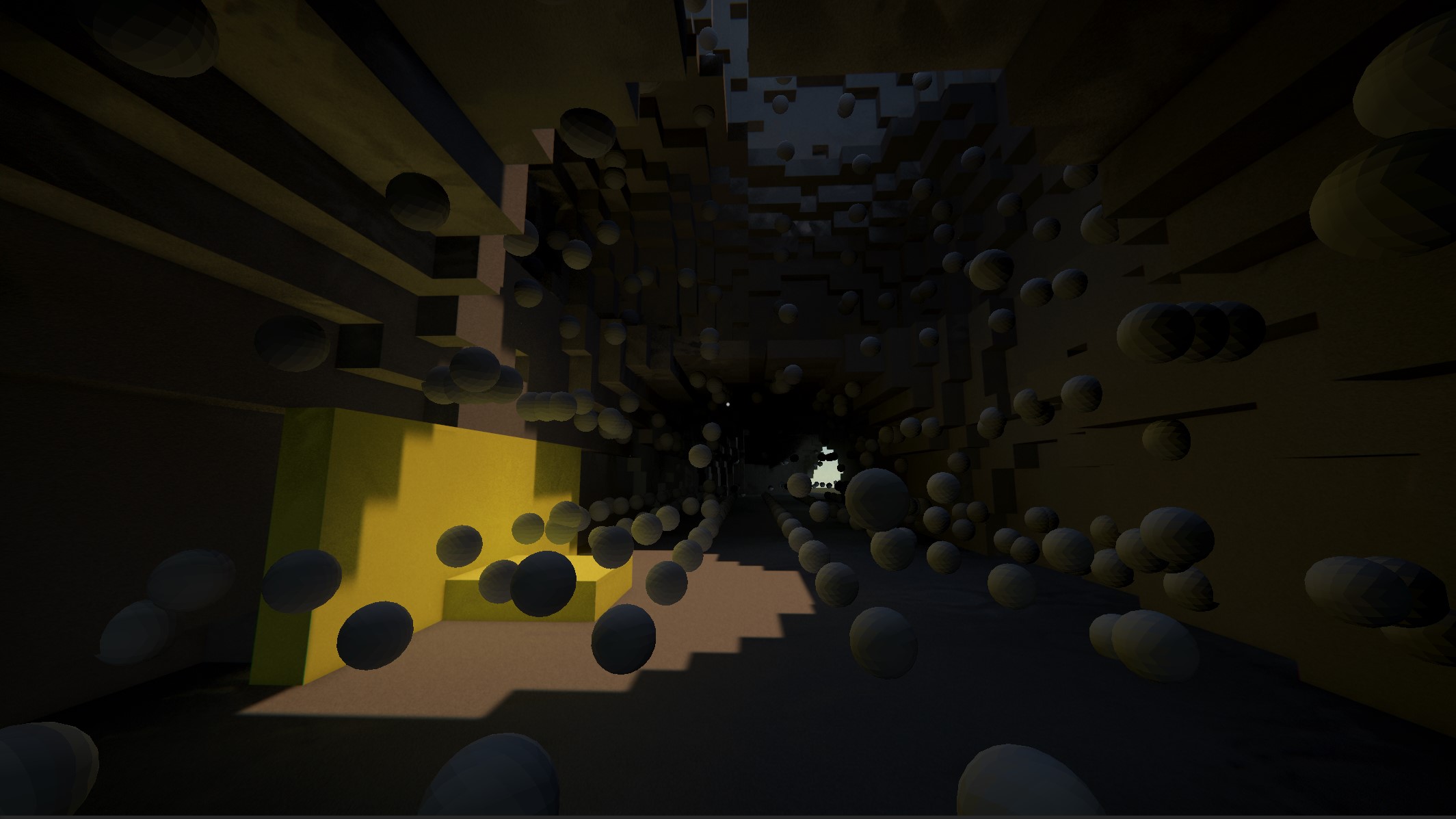

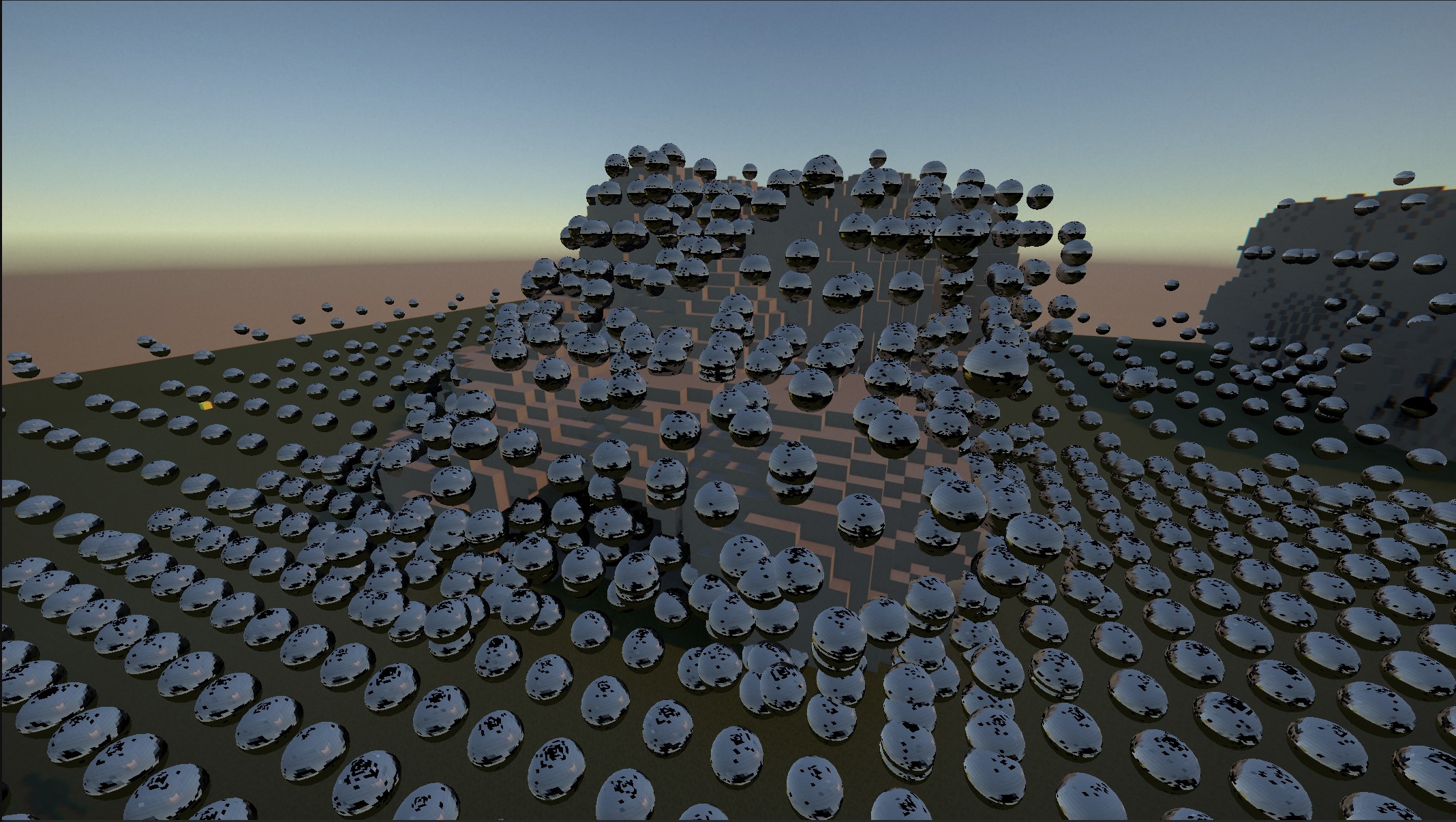

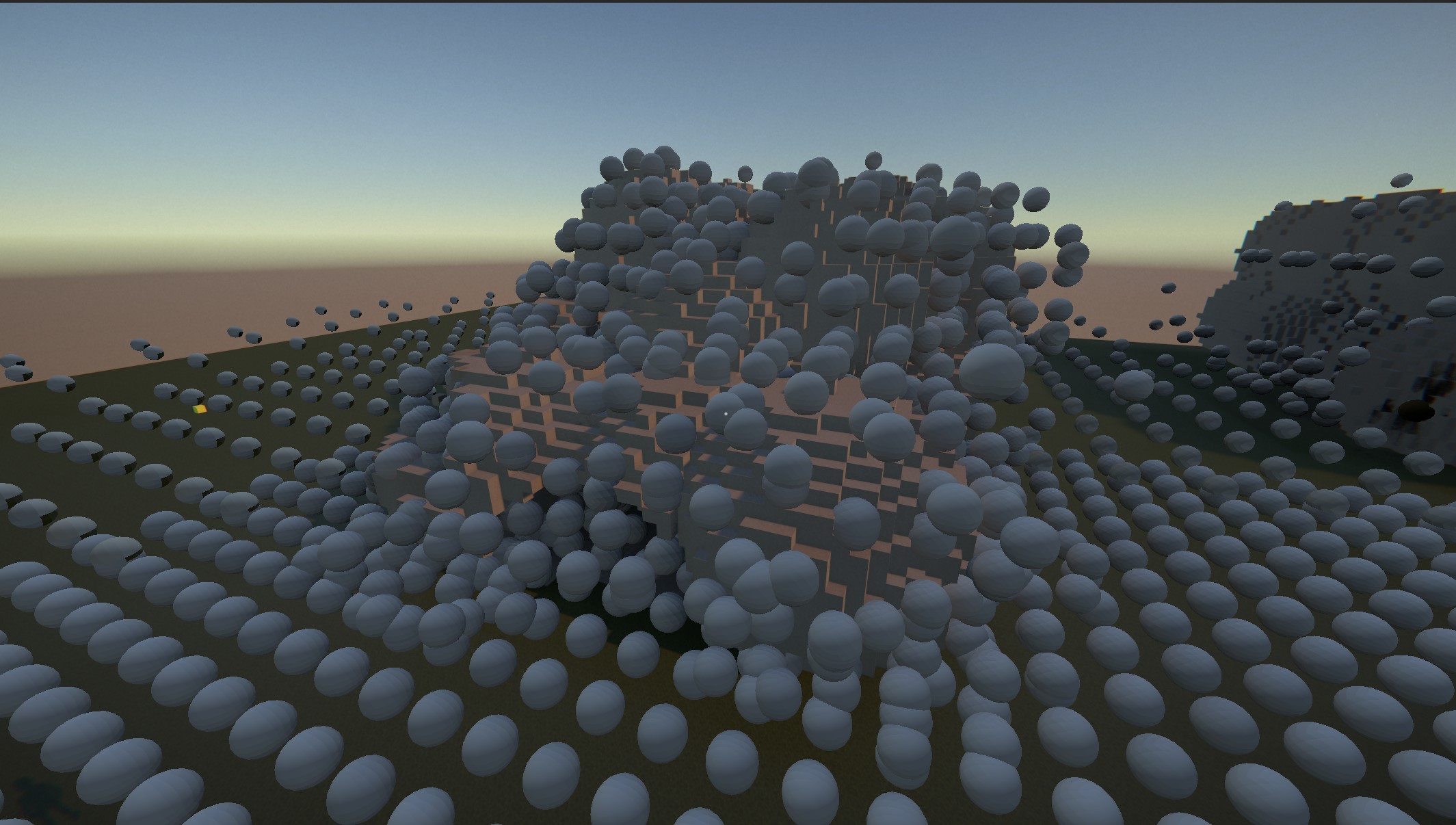

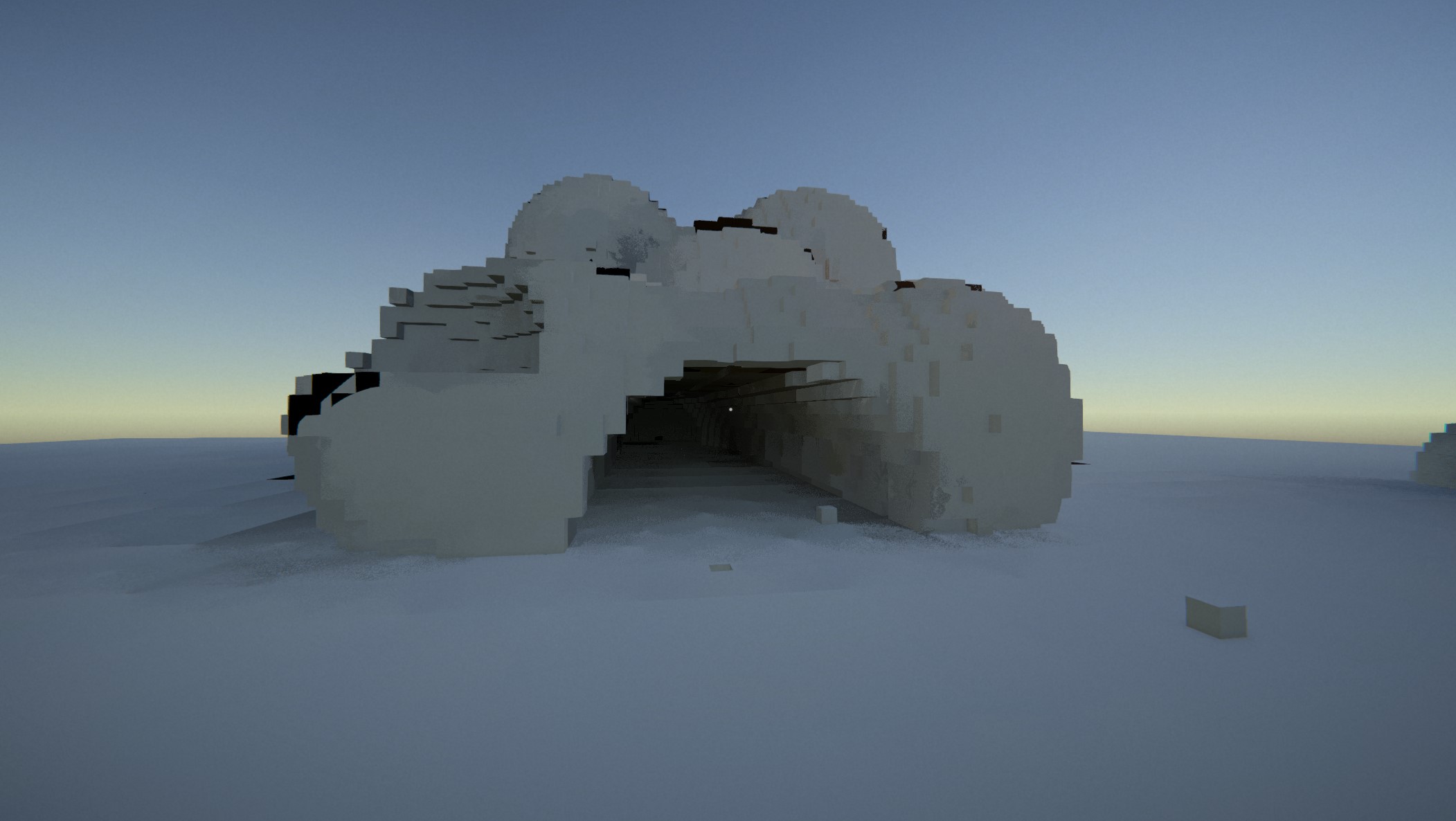

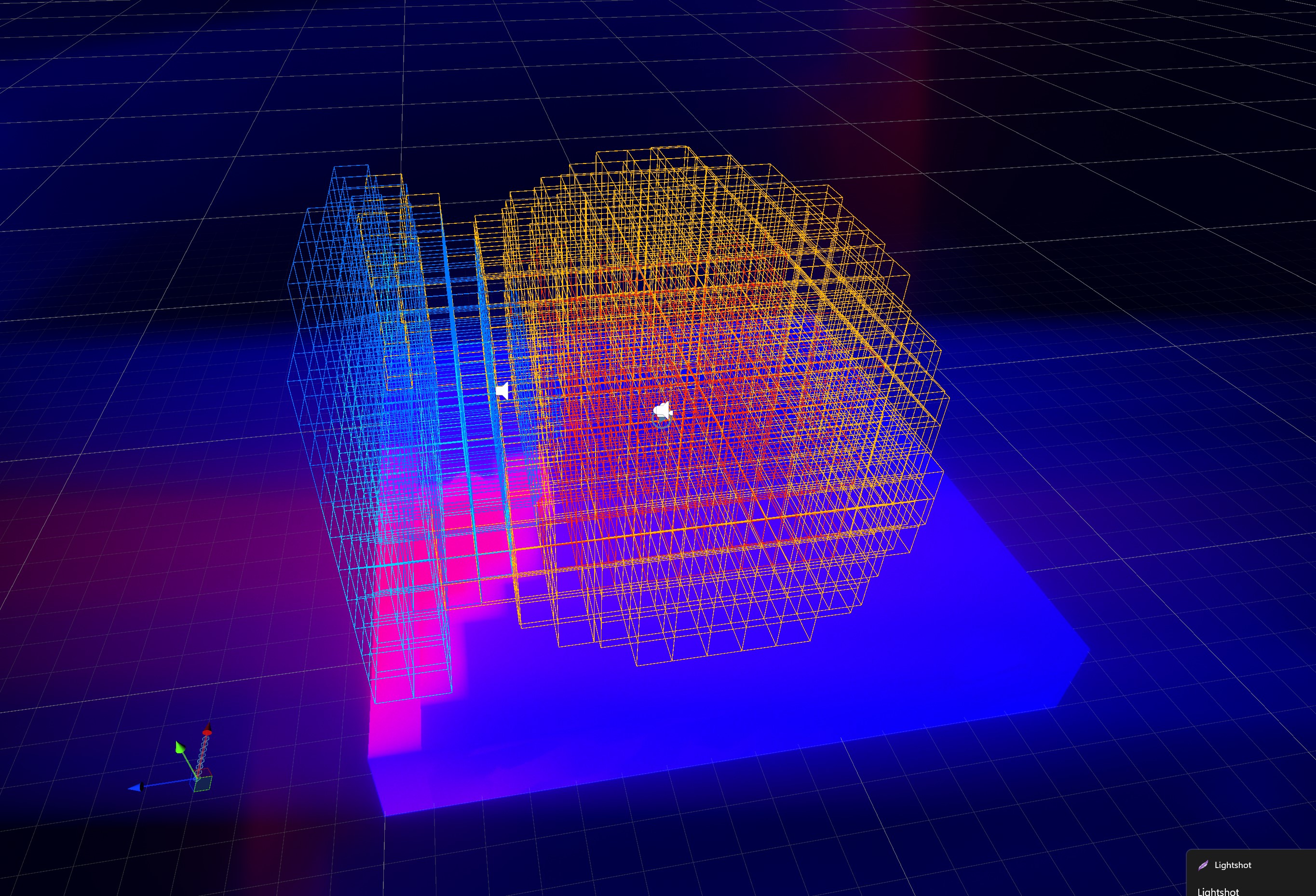

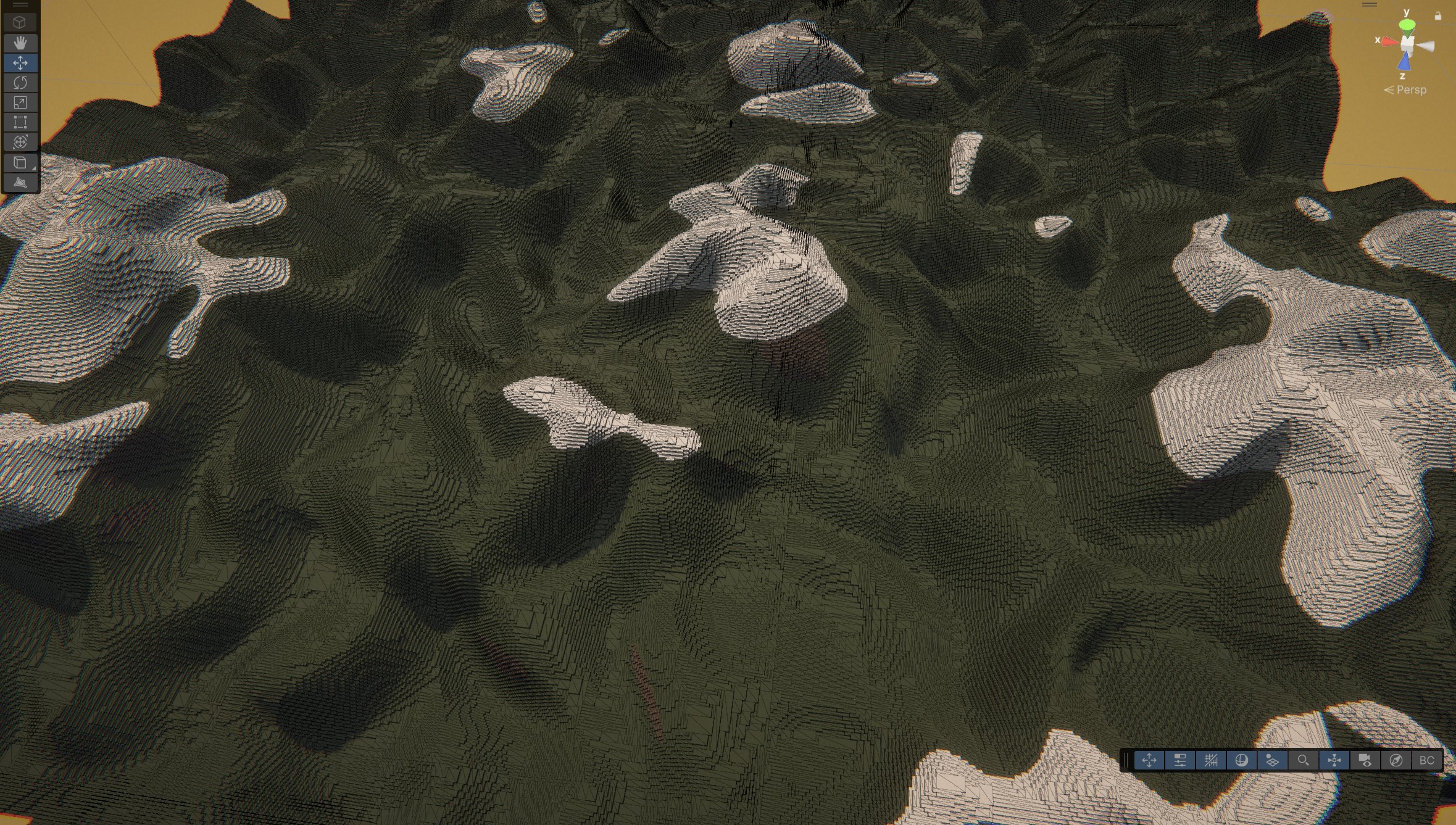

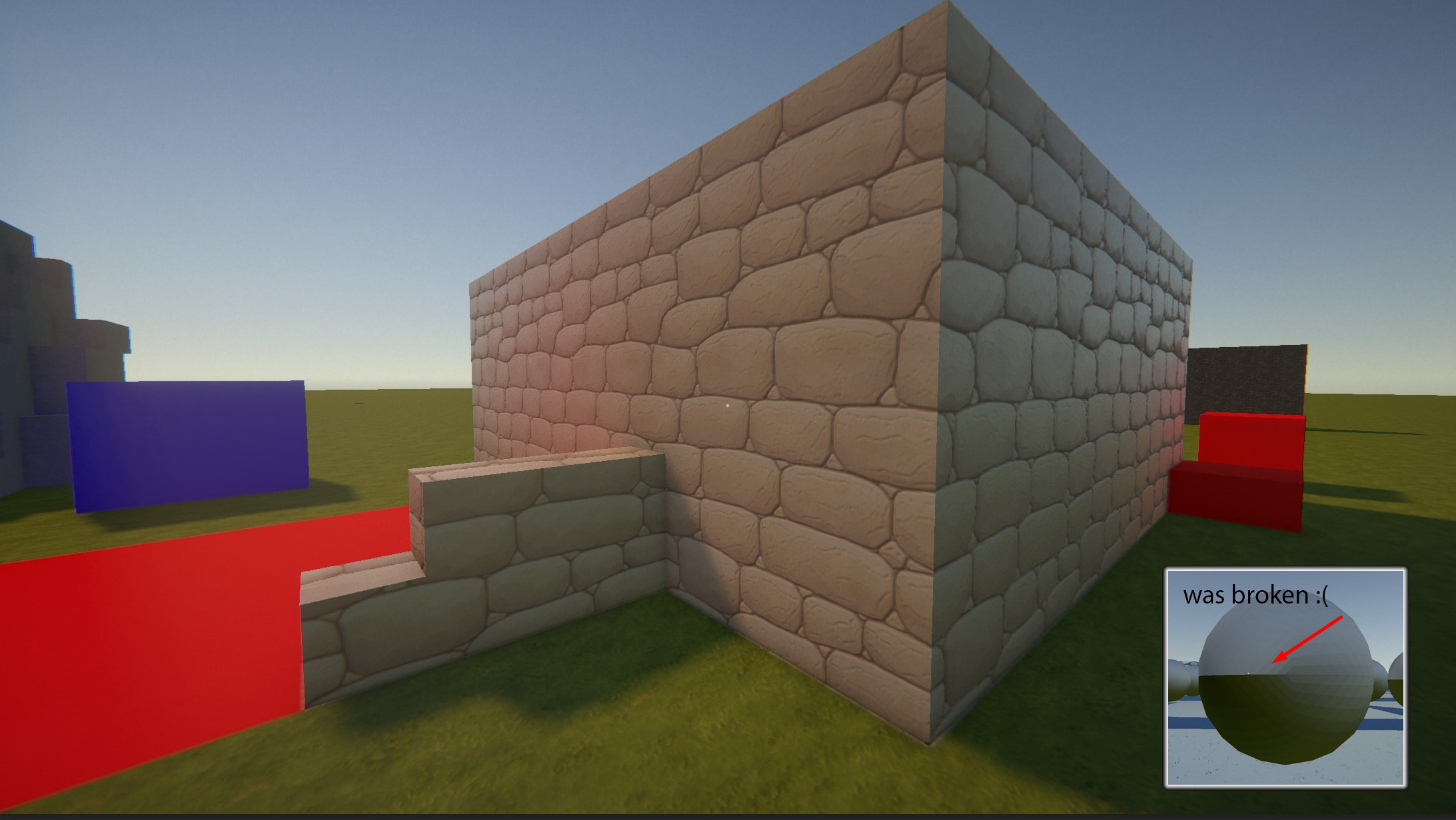

Big progress update - here's a demo of the current state of the global illumination engine. It still needs a lot of work, but I am happy with the progress. The video has a reduced frame rate; the actual average was 90-120 FPS on an RTX 3060.

Surface probes sample:

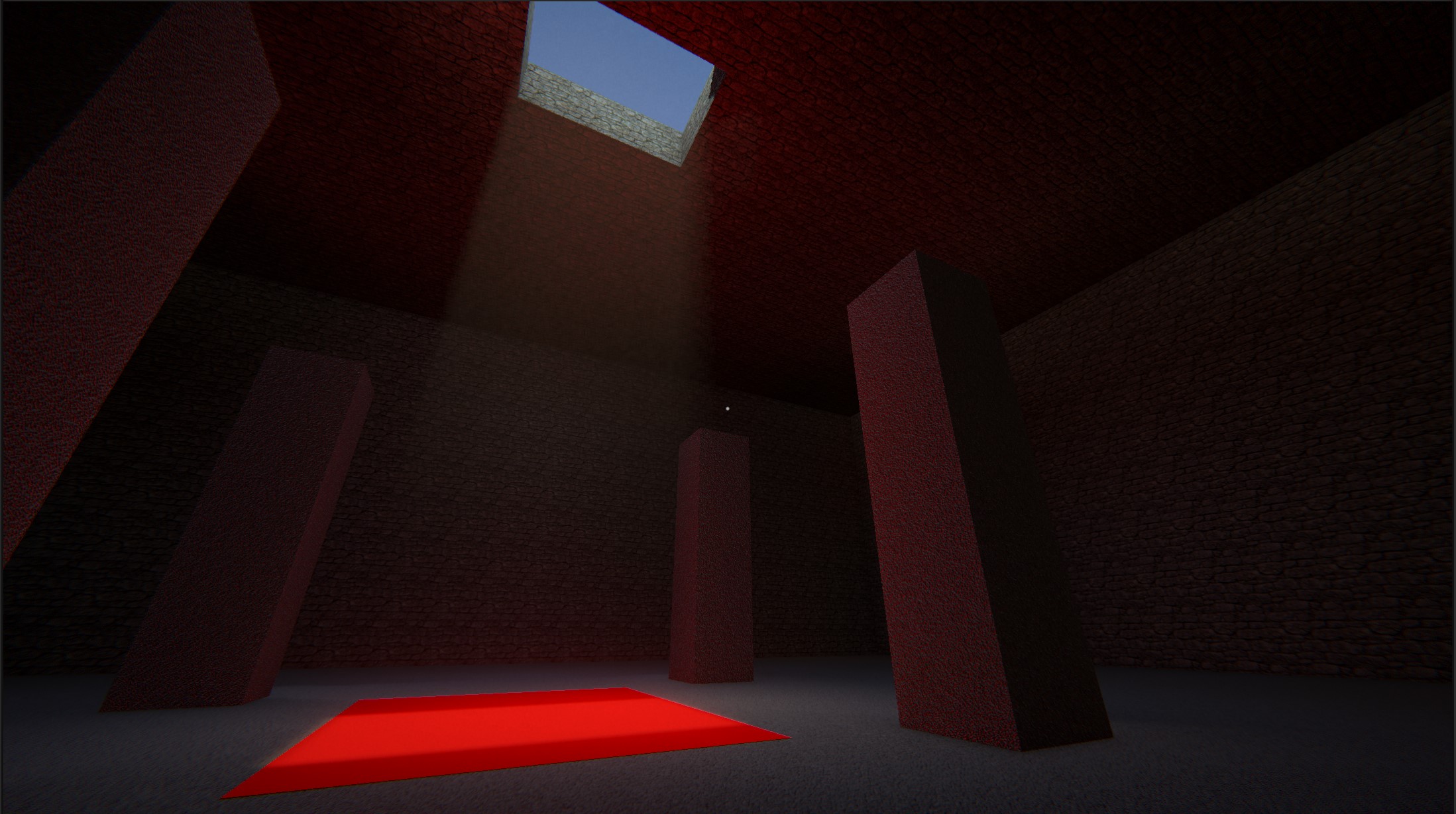

- Diffuse lighting - now works correctly in most scenes, with 1-3 bounces depending on the scene. The far domain gets only 1 bounce + sun shadow, open exteriors get 1-2 bounces, and interiors and caves get 2-3 bounces depending on total luminance

- 2 layers of specular (ray marched voxel space + SSR for nearby objects)

Volume probes accumulate:

- Segments of already marched rays intersecting the probe's AABB

- Used to shade volumetric fog by sampling a sparse 3D texture generated from volume probes in screen space

- All probes that fall out of view or get overlaid by a denser cascade are instantly frozen, still sampleable as a fallback cache, but generate no workload.

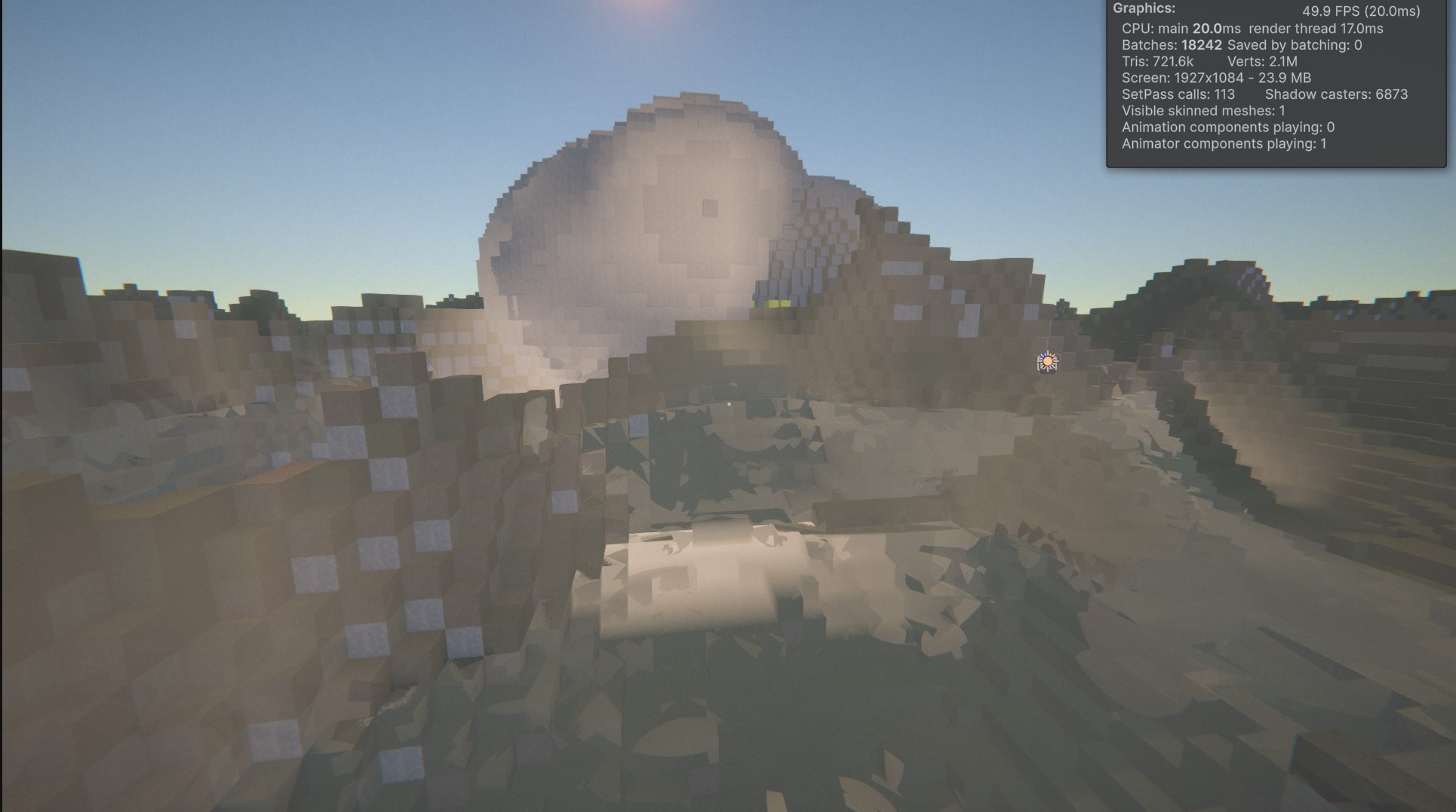

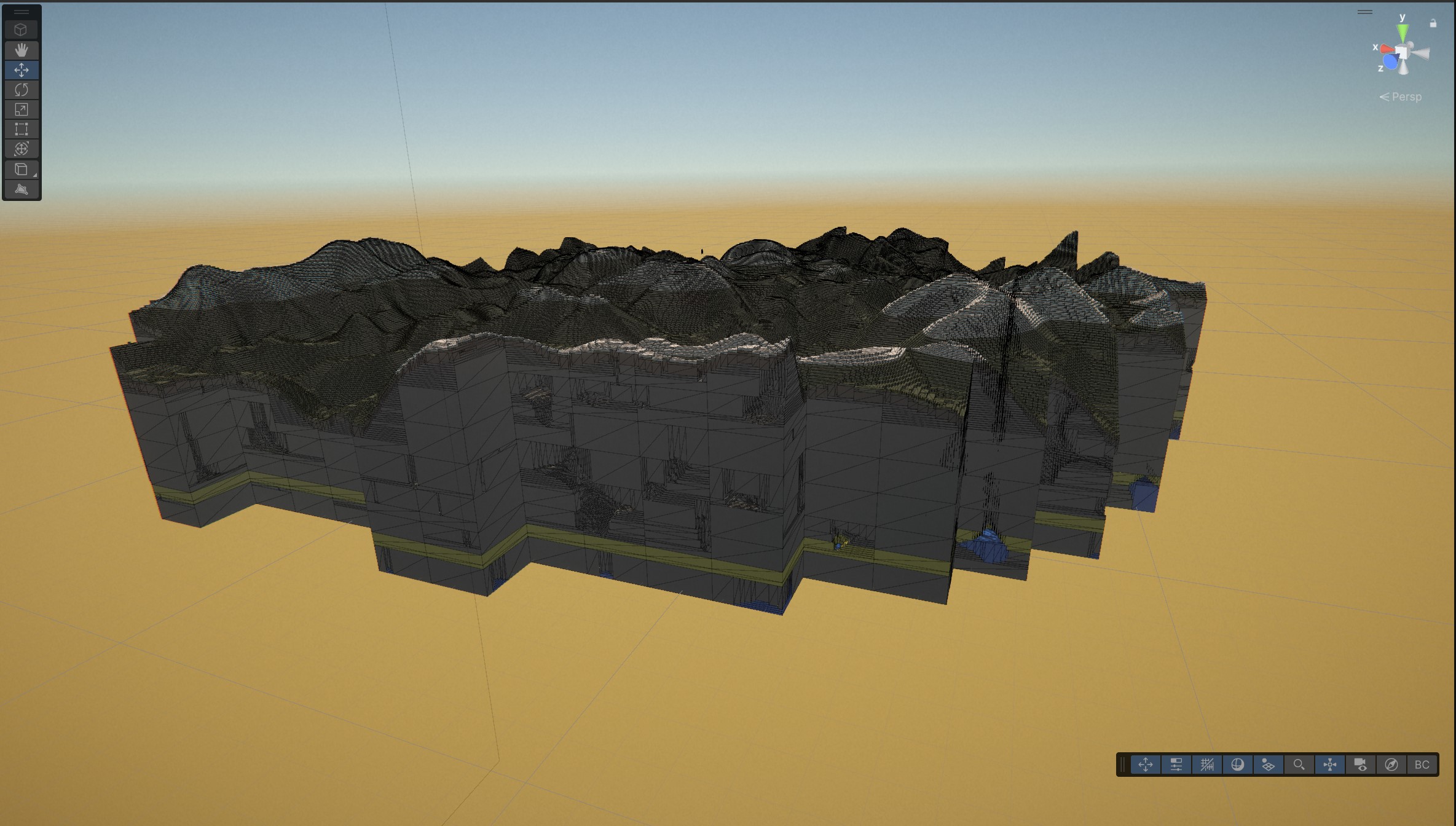

Also added far-domain rendering, extending view distance to 3 km by converting the world into a set of heightmaps for each LOD, with a mechanism to convert far voxel structures into low-poly proxy meshes. GI falls back to spherical harmonics after the 3rd cascade, and the SDF sampler switches to 2D heightmap marching when a ray exits the active voxel area. Heightmaps carry height in the lower 16 bits and material ID in the upper 16 bits.
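As a rough illustration of the heightmap layout described above, here is a minimal sketch of packing and unpacking one 32-bit texel. The function names and the choice of unsigned 16-bit ranges are assumptions for the example, not taken from the engine.

```python
HEIGHT_BITS = 16
FIELD_MASK = (1 << HEIGHT_BITS) - 1  # 0xFFFF, one 16-bit field


def pack_texel(height, material_id):
    """Pack height into the lower 16 bits and material ID into the upper 16 bits."""
    if not 0 <= height <= FIELD_MASK:
        raise ValueError("height does not fit in 16 bits")
    if not 0 <= material_id <= FIELD_MASK:
        raise ValueError("material_id does not fit in 16 bits")
    return (material_id << HEIGHT_BITS) | height


def unpack_texel(texel):
    """Return (height, material_id) from a packed 32-bit texel."""
    height = texel & FIELD_MASK
    material_id = (texel >> HEIGHT_BITS) & FIELD_MASK
    return height, material_id
```

With this layout a single 32-bit fetch during heightmap marching yields both the surface height and the material to shade with.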

A ton of optimizations in the voxel engine

... and as usual, also created a ton of other problems to solve one by one :) Biggest problem now is high hysteresis and low probe reactivity to changes. Also volume probes are very dependent on view and tend to be thrown off by stray bright rays hitting the sun lobe or strong emissives.

Next target is further stabilization, fixing light leaks and improving the volume probe cache concept. When the system gets into a decent shape, I still need to introduce light maps to support point light sources.

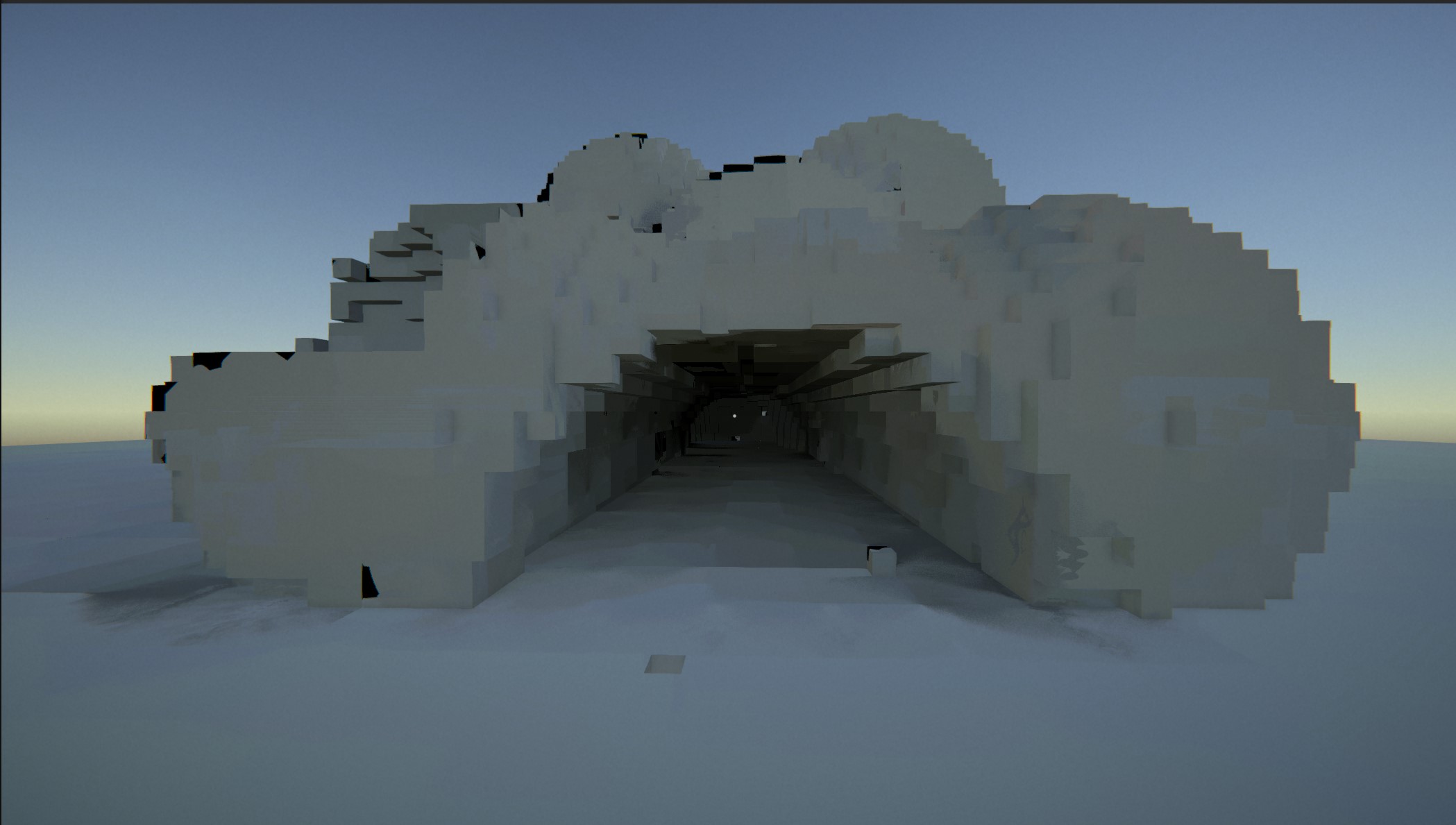

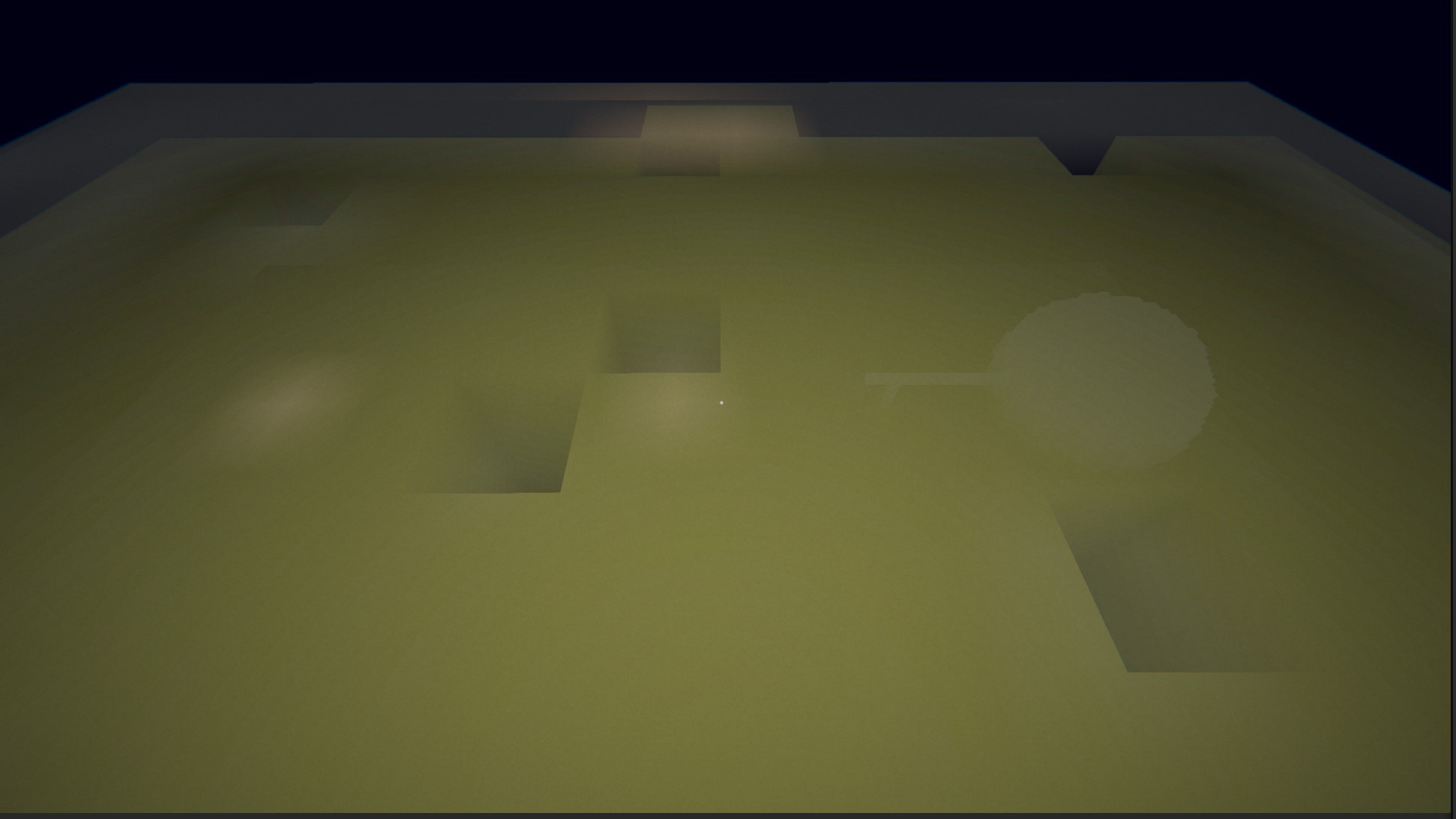

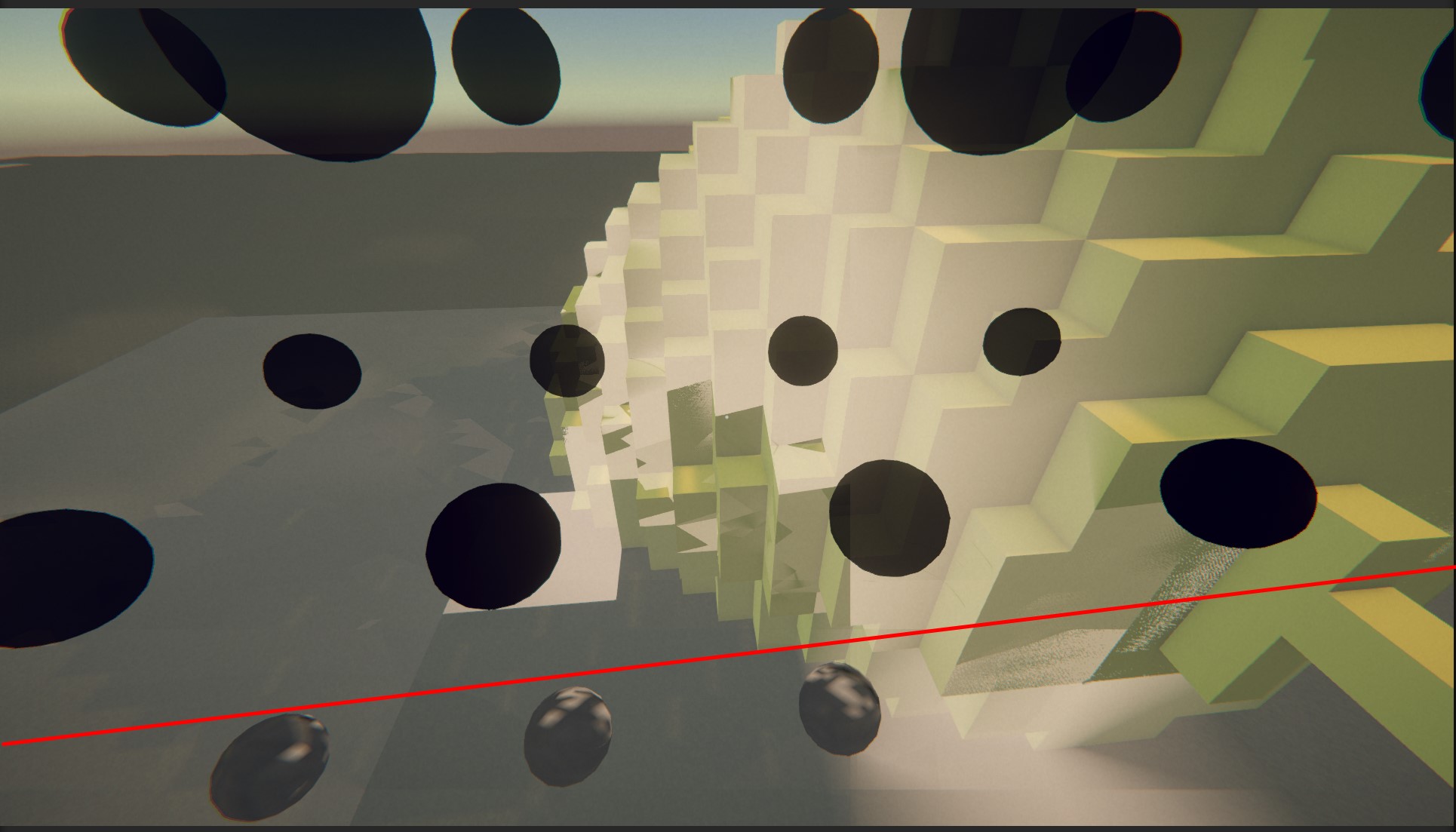

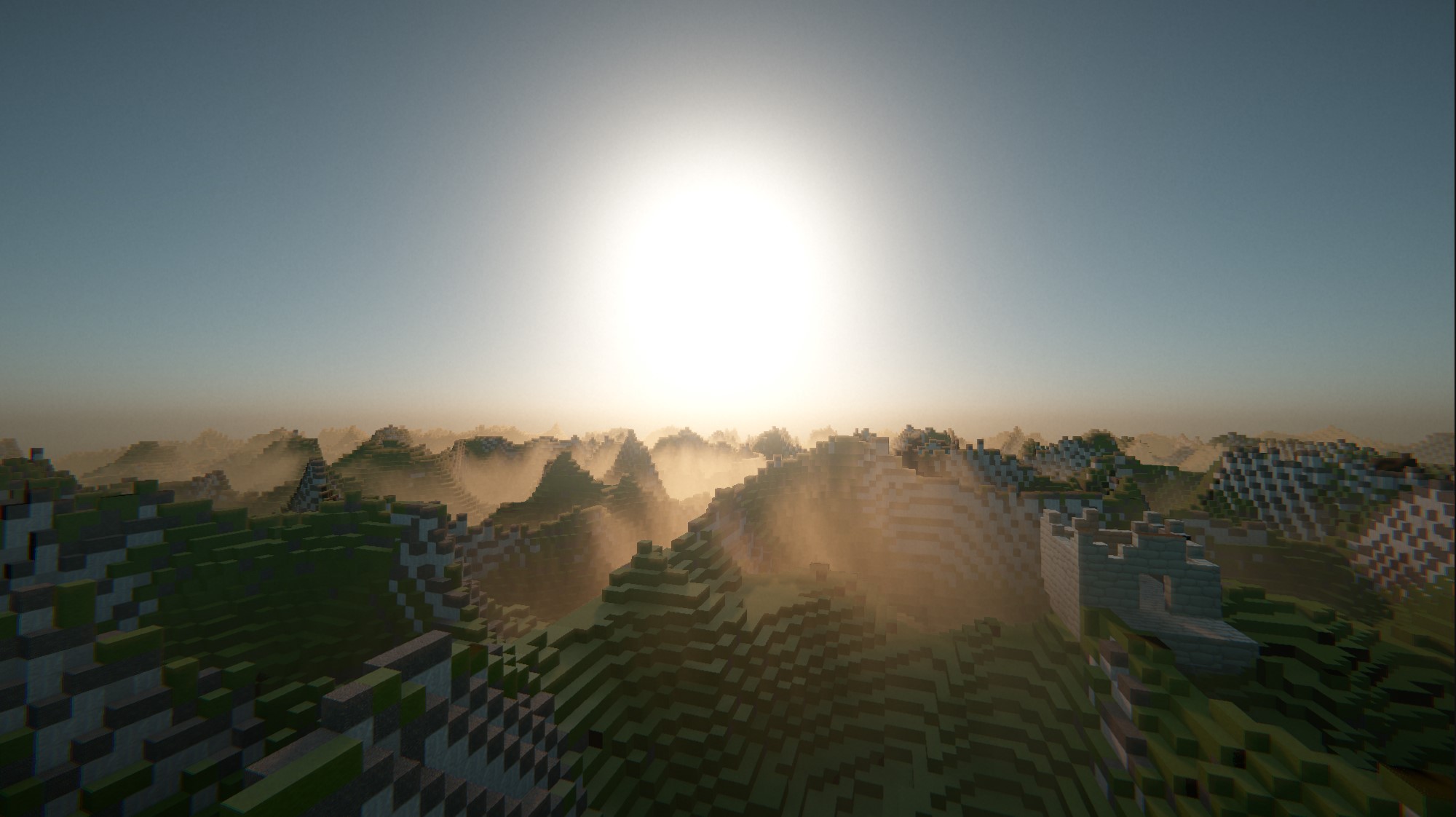

Added atmospheric scatter simulation for skybox, haze and fog. This is still a work in progress; the goal is to simulate an atmosphere with pollutants, water droplets and dust.

This implementation combines Rayleigh and Mie scattering equations, optimized with a small optical depth LUT. Rayleigh scattering approximates how different wavelengths scatter in the atmosphere, creating the sky color. Mie scattering simulates the predominantly forward scattering of light in a volume of particles, creating haze.
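For reference, the two phase functions typically paired in this kind of model can be sketched as below. The Rayleigh phase function is the standard one. Using Henyey-Greenstein as the Mie approximation is an assumption here (it is a common stand-in, and its `g` parameter plays the role of the particle anisotropy); the engine may use a different Mie approximation.

```python
import math


def rayleigh_phase(cos_theta):
    """Standard Rayleigh phase function, normalized over the sphere."""
    return 3.0 / (16.0 * math.pi) * (1.0 + cos_theta * cos_theta)


def henyey_greenstein_phase(cos_theta, g):
    """Henyey-Greenstein phase function (assumed Mie approximation).

    g is the anisotropy in (-1, 1): 0 is isotropic, values near 1
    scatter strongly forward.
    """
    denom = 1.0 + g * g - 2.0 * g * cos_theta
    return (1.0 - g * g) / (4.0 * math.pi * denom ** 1.5)
```

Both functions integrate to 1 over all directions, so changing `g` only redistributes scattered light between forward and backward directions; the total amount is controlled separately by the scattering coefficients.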

There are three layers of fog:

- light scattering haze (softens distant edges)

- height based depth fog with animated wind texture (creates a sense of volume)

- distant band of fog that blends to skybox color (fades geometry to avoid hard edges)
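One common way to get a height-based fog layer without marching is an exponential density profile, which has a closed-form optical depth along a ray. The sketch below assumes that profile; `density0`, `falloff` and the function names are illustrative, and the animated wind texture is left out.

```python
import math


def height_fog_optical_depth(origin_height, dir_y, distance, density0, falloff):
    """Optical depth along a ray through fog with density0 * exp(-falloff * h).

    origin_height: height of the ray origin
    dir_y: vertical component of the normalized ray direction
    distance: length of the ray segment
    """
    base = density0 * math.exp(-falloff * origin_height)
    x = falloff * dir_y * distance
    if abs(x) < 1e-6:
        # Nearly horizontal ray: density is constant along the segment.
        return base * distance
    # Analytic integral of the exponential profile; expm1 keeps precision for small x.
    return base * distance * (-math.expm1(-x)) / x


def fog_transmittance(optical_depth):
    """Fraction of light that survives the fog (Beer-Lambert law)."""
    return math.exp(-optical_depth)
```

The final fog factor would be `1 - fog_transmittance(...)`, which can then be blended with the haze and distant fog band.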

I'll be adding SDF ray marching to add more volumetric behavior. I'm trying to avoid froxels for performance reasons.

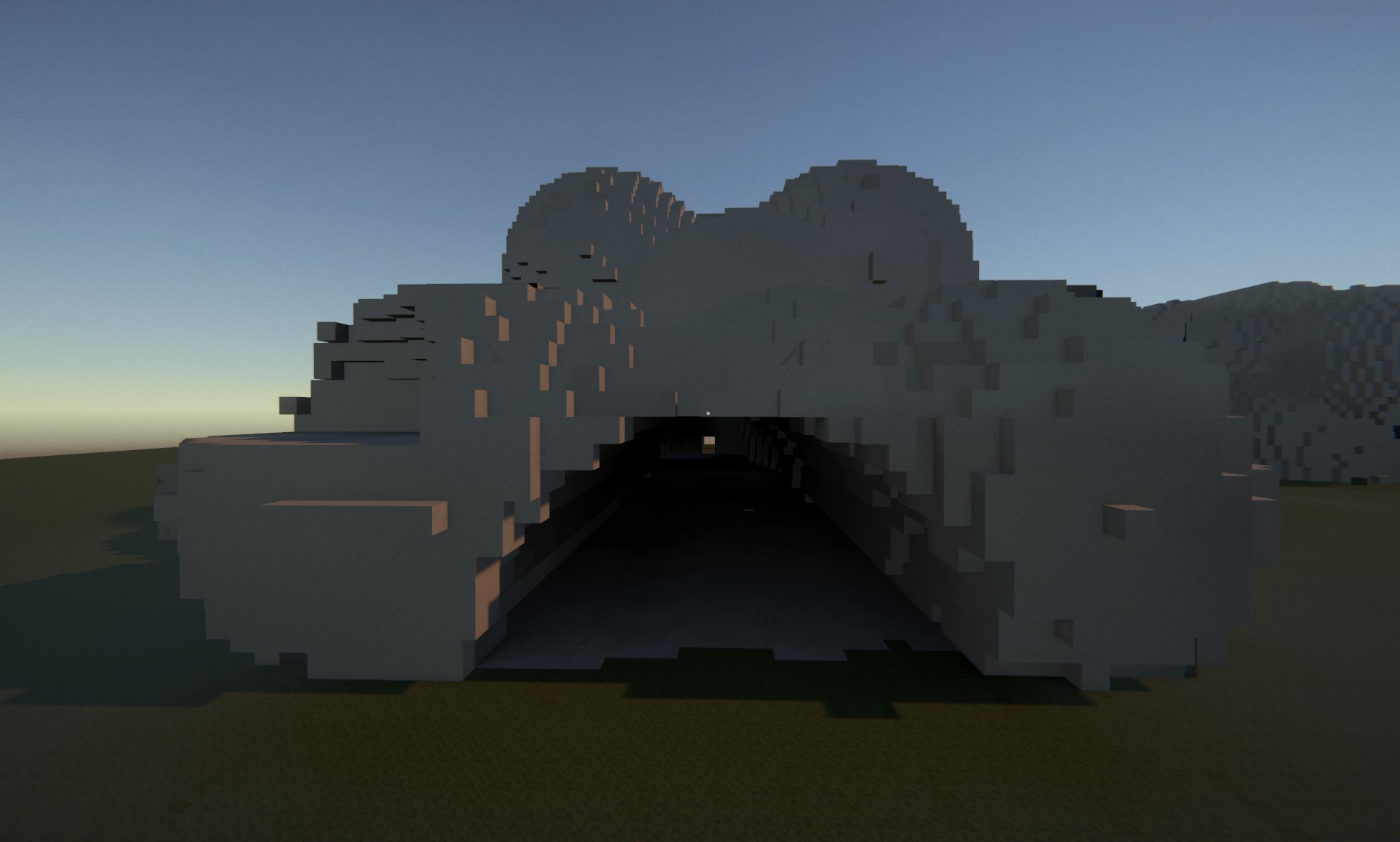

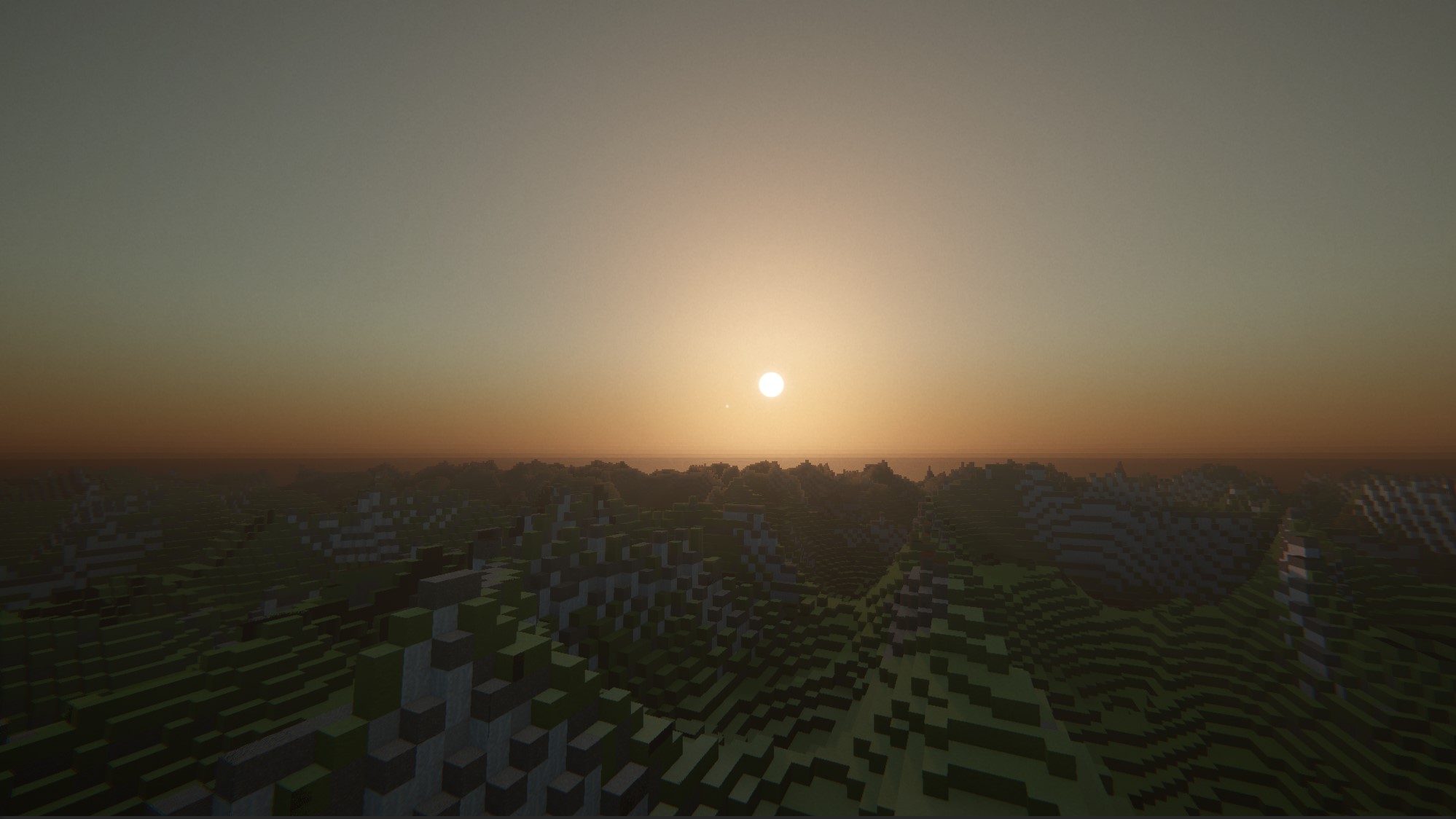

High particle anisotropy, low Mie coefficient -> colourful sunset

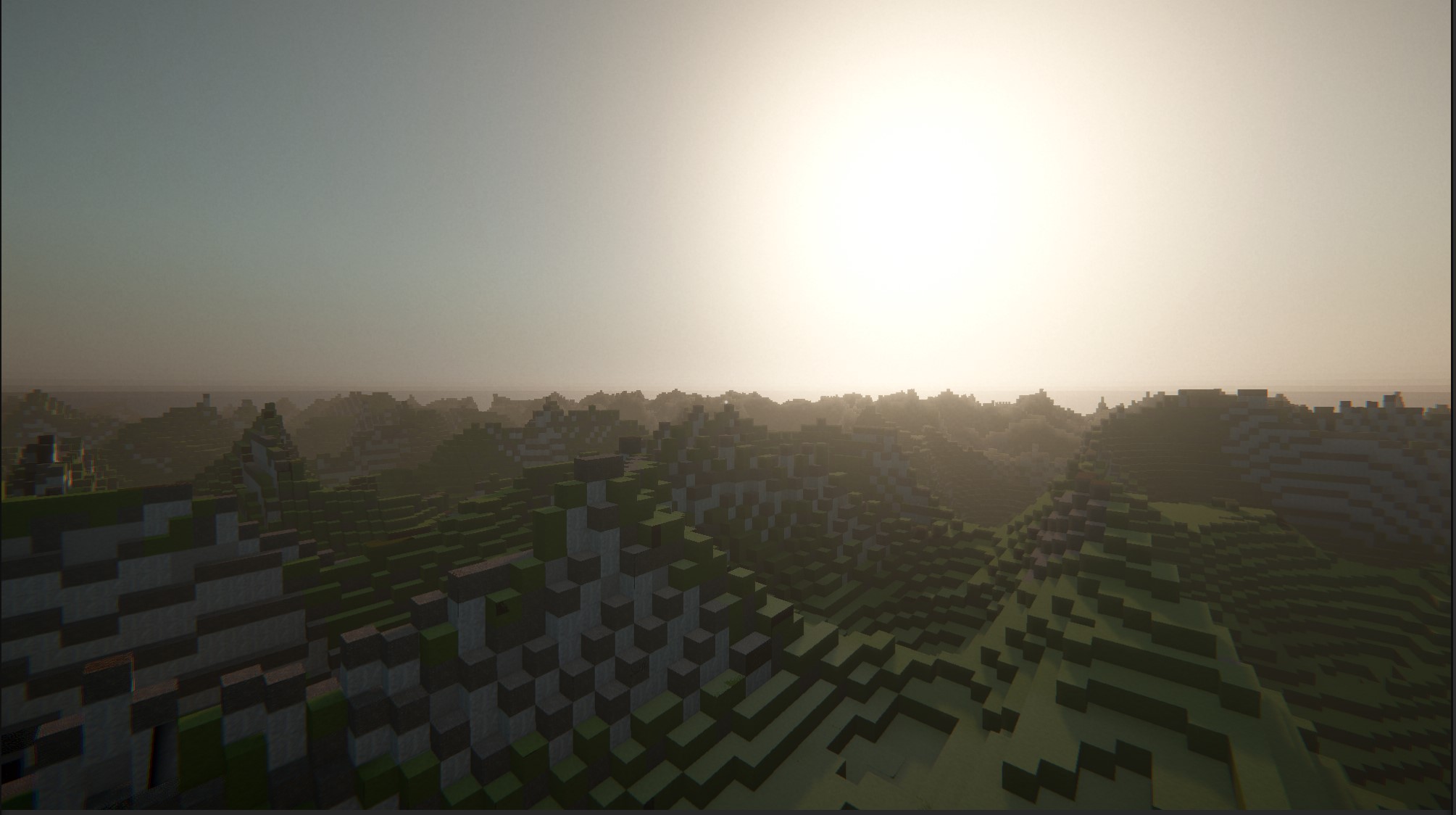

Increased humidity -> higher haze (increased Mie coefficient, low particle anisotropy)

The ultimate intent is smooth blending between different times of day, weather, nearby anomalies and biomes.

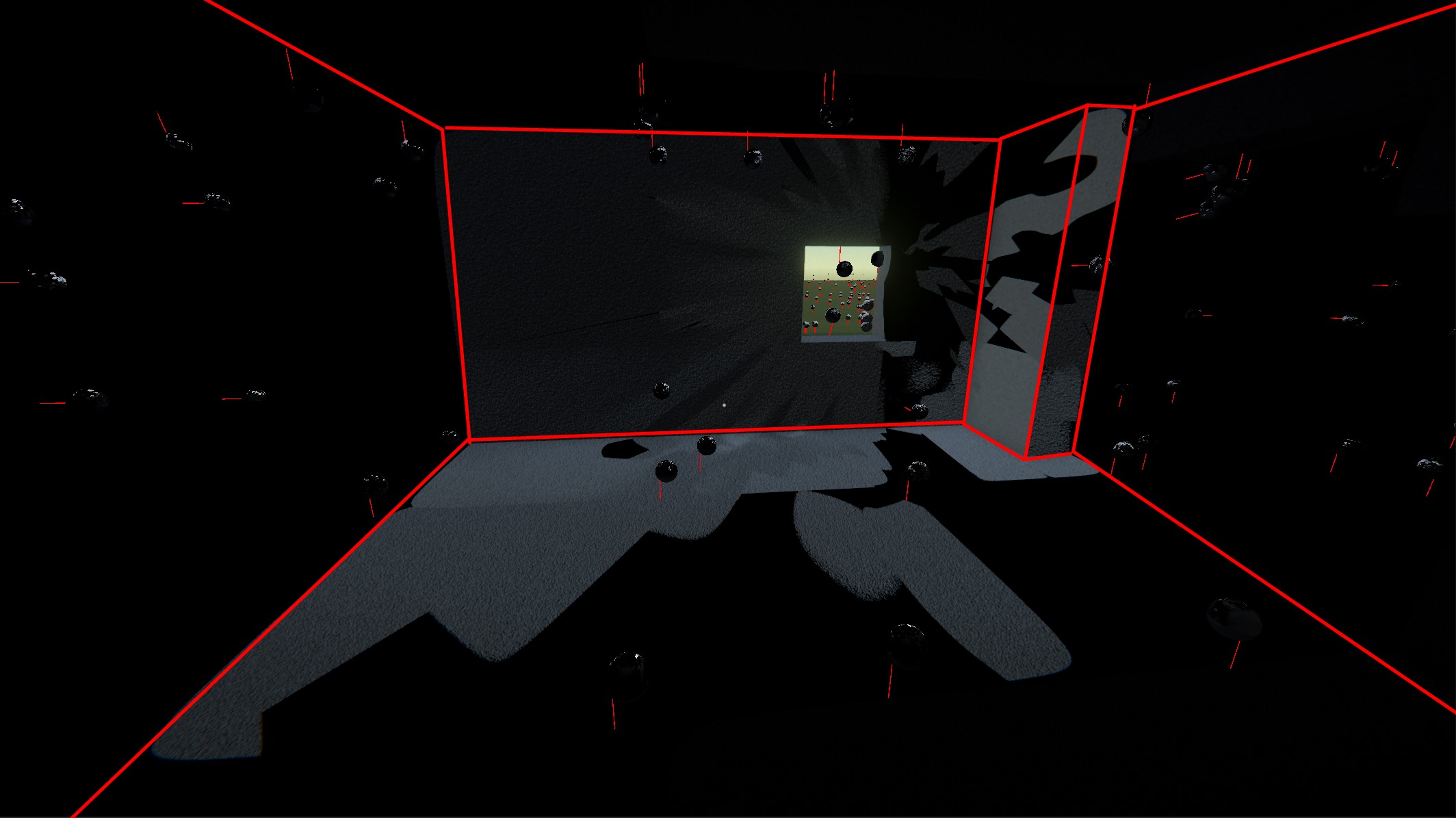

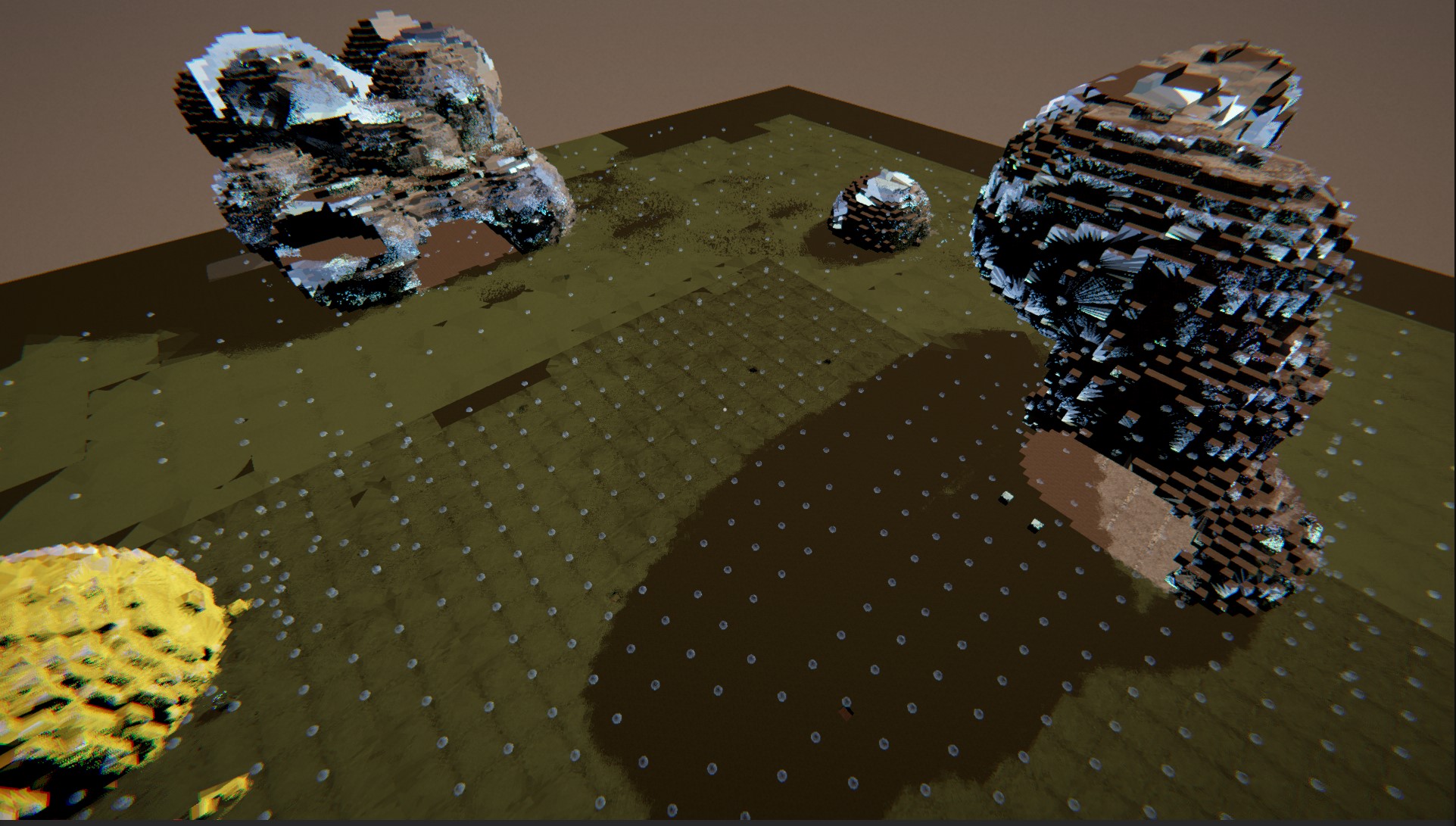

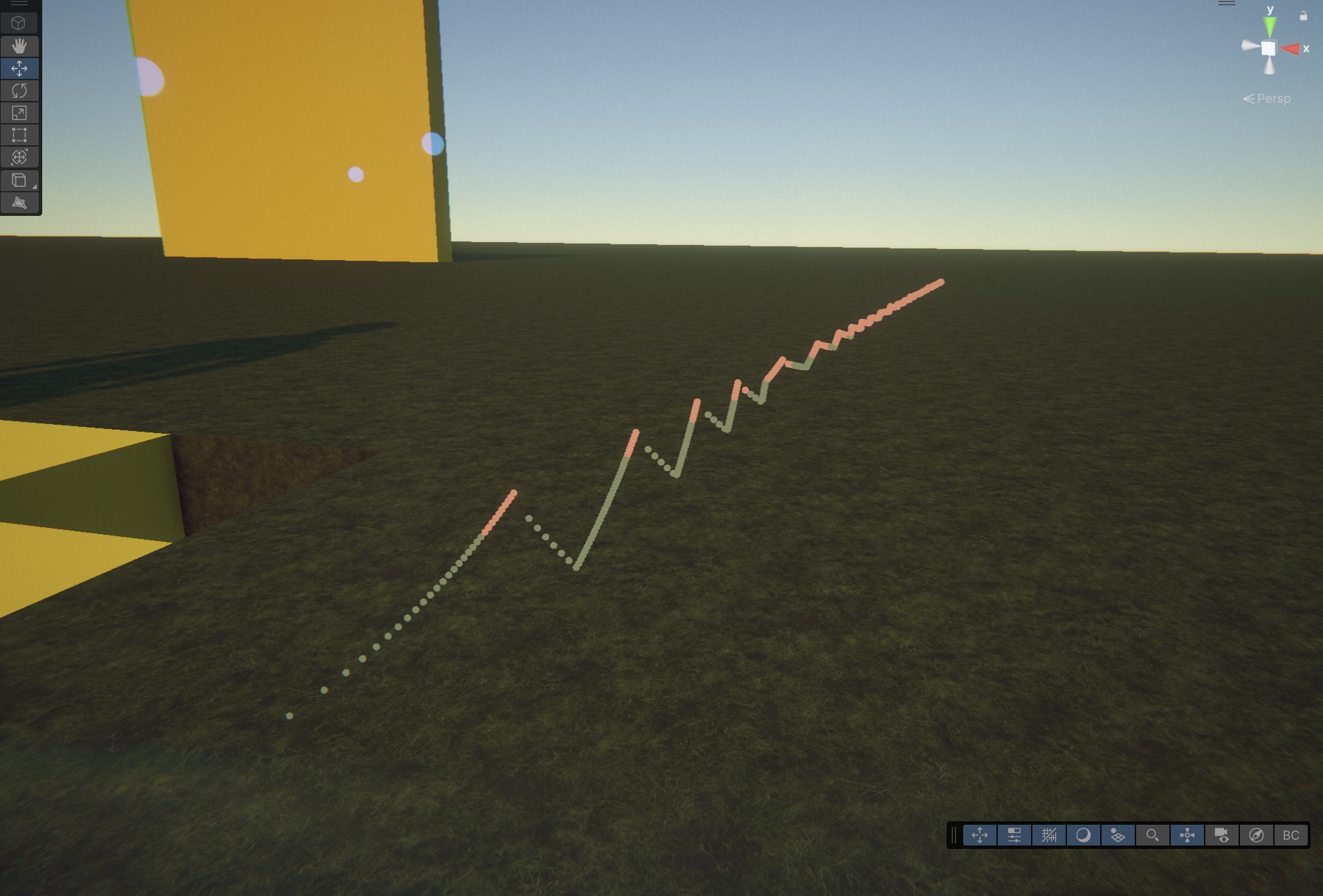

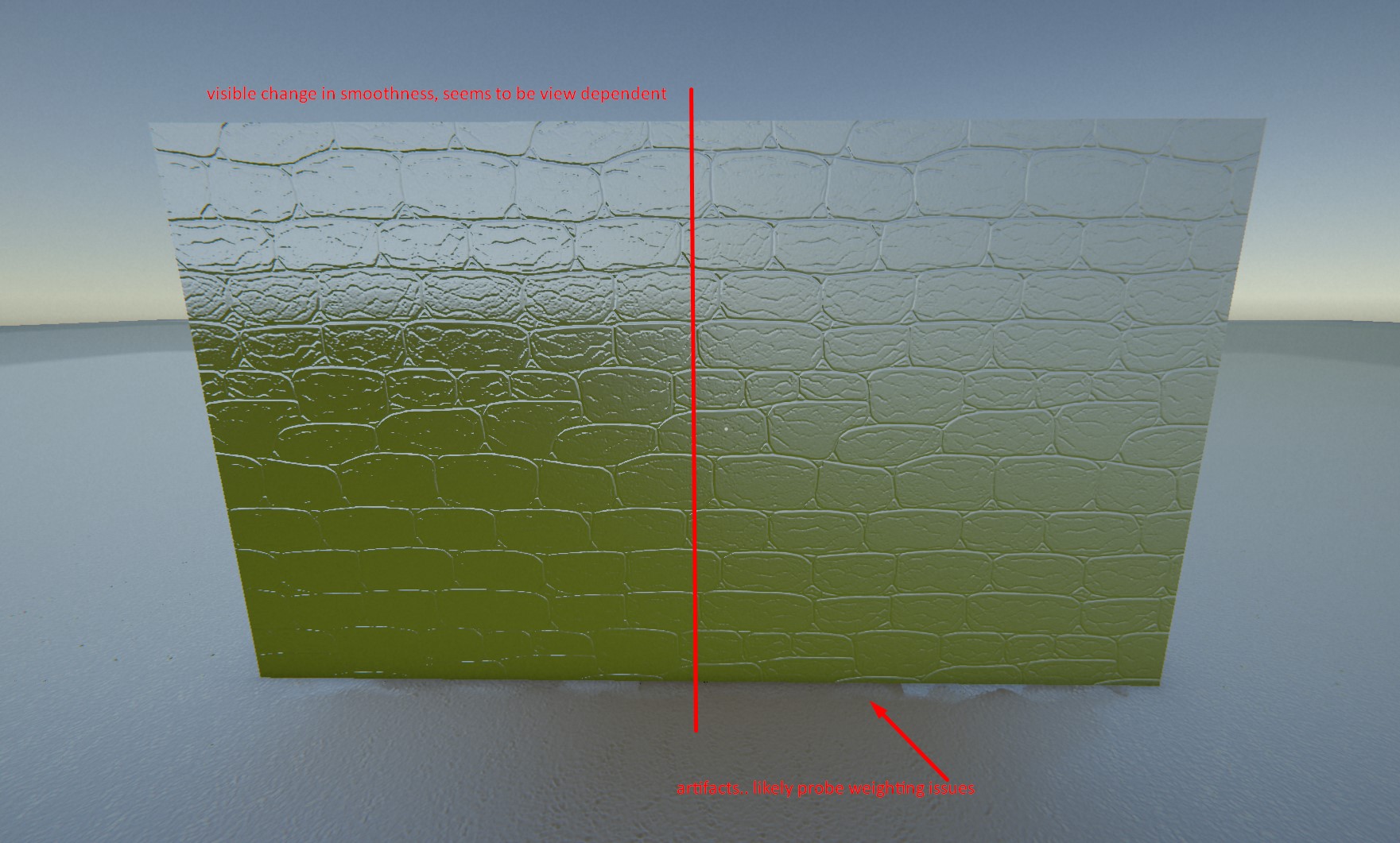

First attempt at indirect shadows. The Chebyshev occlusion and weighting are still a work in progress, but the first results seem good. Denser cascades are required, but everything is still running nicely at ~3.5 ms on an RTX 3060.
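For context, a Chebyshev occlusion test for probes usually looks something like the sketch below. It assumes each probe direction stores the mean and mean squared hit distance (as in variance shadow maps); that storage layout, the variance floor and the function name are assumptions, not details from the engine.

```python
def chebyshev_visibility(distance, mean, mean_sq, min_variance=1e-6):
    """Upper bound on the probability that a point at `distance` is visible.

    mean, mean_sq: first and second moments of the hit distance stored by
    the probe for the direction toward the shaded point.
    """
    if distance <= mean:
        # Closer than the average occluder: treat as fully visible.
        return 1.0
    variance = max(mean_sq - mean * mean, min_variance)
    delta = distance - mean
    # One-sided Chebyshev inequality: P(x >= distance) <= var / (var + delta^2)
    return variance / (variance + delta * delta)
```

The result can be used directly as a probe blending weight, so probes that sit behind a wall relative to the shaded point contribute little, which is what reduces light leaks and produces indirect shadowing.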

Also added a very fast 4-tap weighted cross blur using LDS that generates 7 mips for specular (~10us per level) + further optimizations.

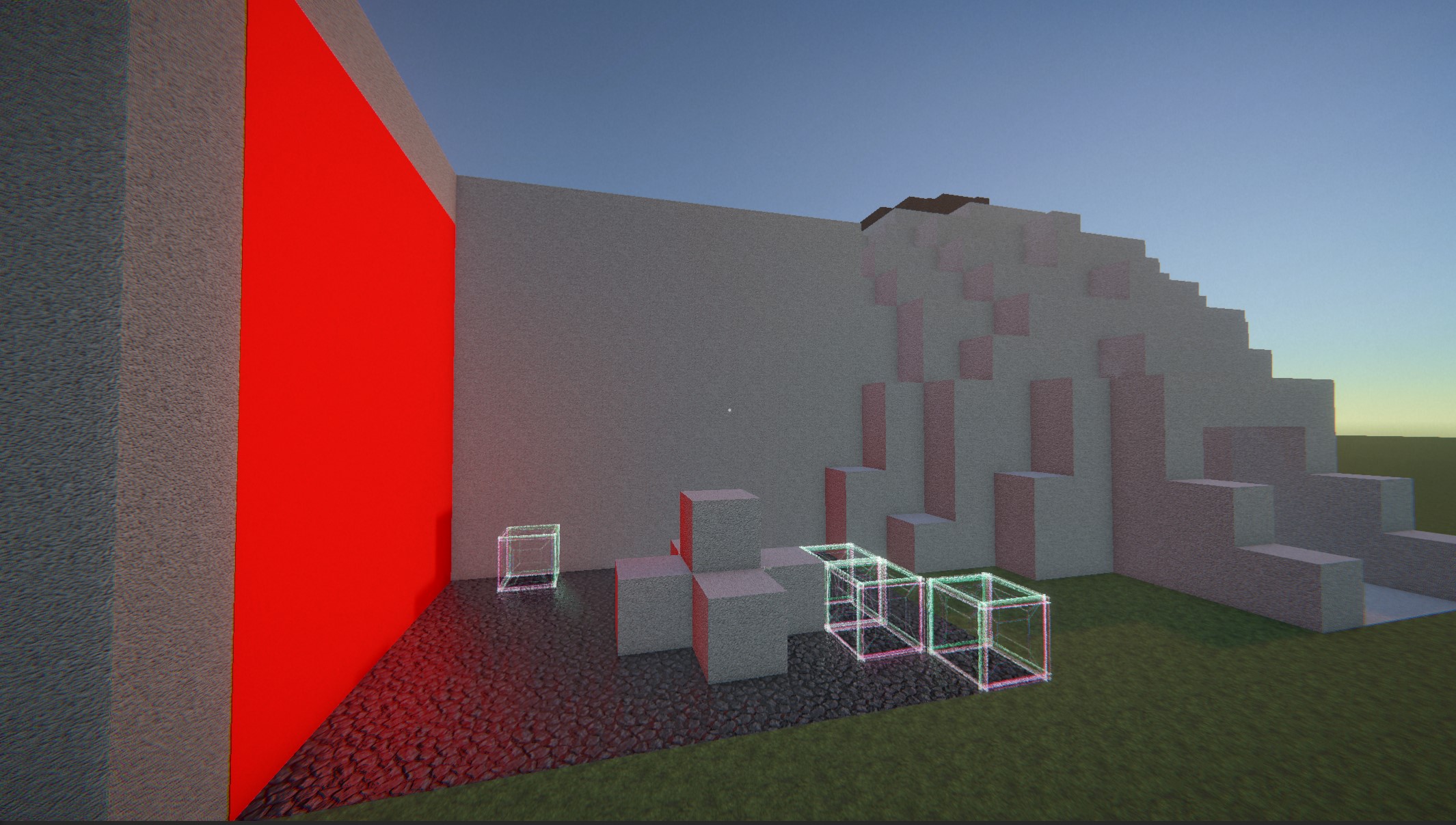

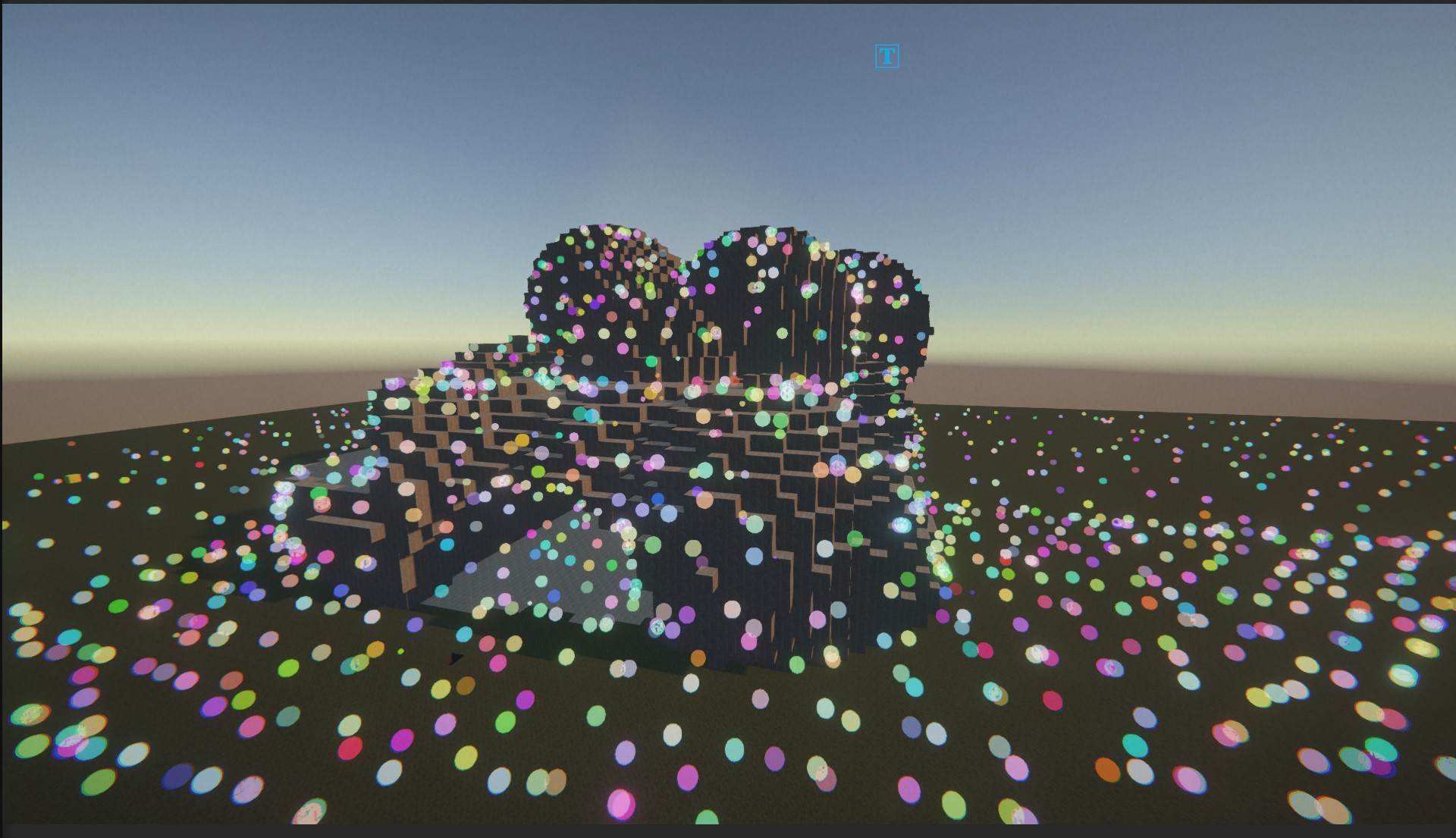

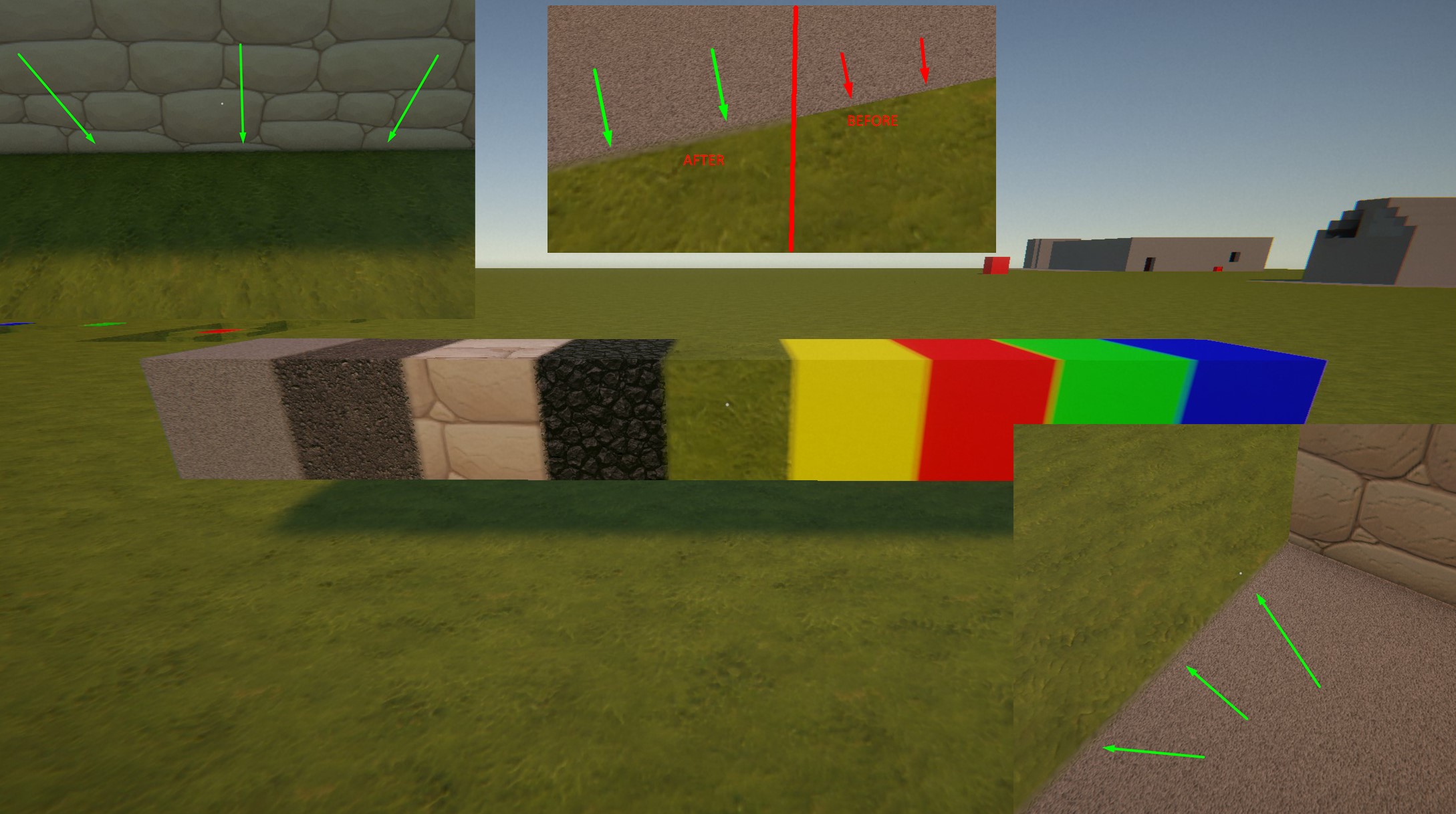

Added a tiny voxel material blend on voxel edges. Materials and structures now feel more grounded and don't look like they float.

To achieve this I had to implement dynamic materials created from config, so texture packing is now automatic. The upside is that I now have a texture array for each texture type. The shader needs access to all textures in order to create a blend. It scans the neighbors of each edge. This was quite easy to achieve by leveraging the voxel material 3D map and LUT that are already present on the GPU for GI material sampling.

Another big upside is that I now have material properties directly in the block asset definition, right next to other gameplay properties. This also enabled me to automatically render a UI icon for each block on load.

Perf impact is a few microseconds. The downside is one small artifact that I will get rid of later.

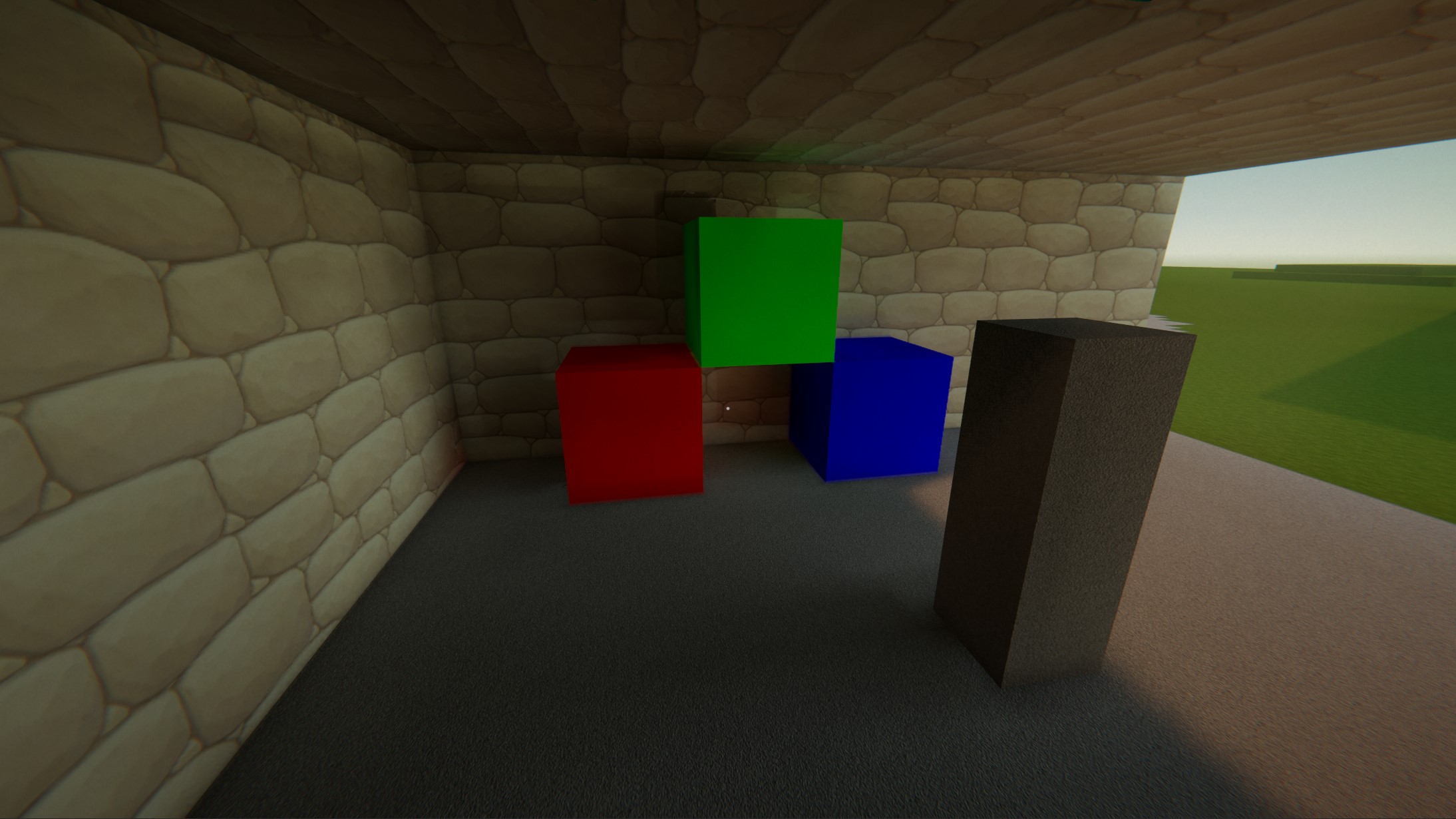

Specular fixed. The problem was caused by the mip blur on radiance probes not wrapping around edges: when the octa tiles were wrapped around a sphere, the corners created a sharp seam that showed up as a reflection artifact. The roughness mip prefiltering now taps across edges when blurring.

You can see the walls reflecting the ground, sky and color correctly now, with the correct specular response. (These are traced speculars, not SSR; SSR is blended on top, and only for very smooth surfaces.)
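To illustrate the seam fix, here is a minimal sketch of how a blur tap that falls just outside an octahedral tile can be folded back inside. It relies on the fact that the two halves of each tile edge map to the same directions on the sphere. The function names and the texel-center convention are assumptions for the example.

```python
import math


def wrap_octa_texel(x, y, size):
    """Fold a texel coordinate up to one tile outside an octahedral tile back inside.

    Crossing an edge mirrors the coordinate along that edge, which is not
    the same as a toroidal (repeat) wrap.
    """
    if x < 0 or x >= size:
        x = -1 - x if x < 0 else 2 * size - 1 - x
        y = size - 1 - y
    if y < 0 or y >= size:
        y = -1 - y if y < 0 else 2 * size - 1 - y
        x = size - 1 - x
    return x, y


def texel_direction(x, y, size):
    """Unit direction for the center of texel (x, y) using octahedral decoding."""
    u = (x + 0.5) / size * 2.0 - 1.0
    v = (y + 0.5) / size * 2.0 - 1.0
    z = 1.0 - abs(u) - abs(v)
    if z < 0.0:
        # Lower hemisphere: unfold the outer triangles of the square.
        u, v = ((1.0 - abs(v)) * math.copysign(1.0, u),
                (1.0 - abs(u)) * math.copysign(1.0, v))
    n = math.sqrt(u * u + v * v + z * z)
    return (u / n, v / n, z / n)
```

For an 8x8 tile, the tap at `(-1, 2)` folds to `(0, 5)`, whose direction is a close neighbor of texel `(0, 2)` on the sphere. A plain repeat wrap would instead fetch `(7, 2)`, which points to the opposite side of the sphere.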

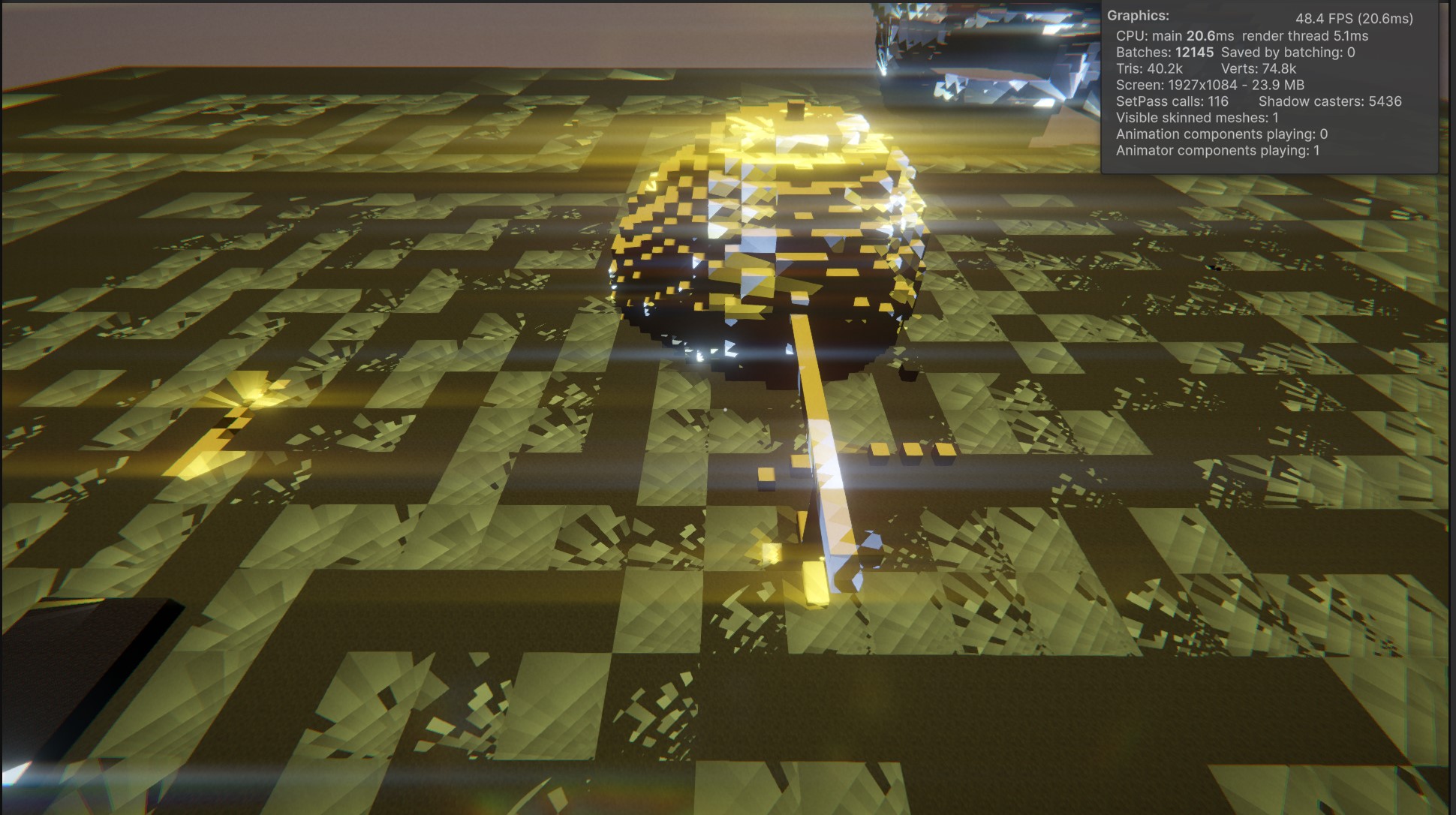

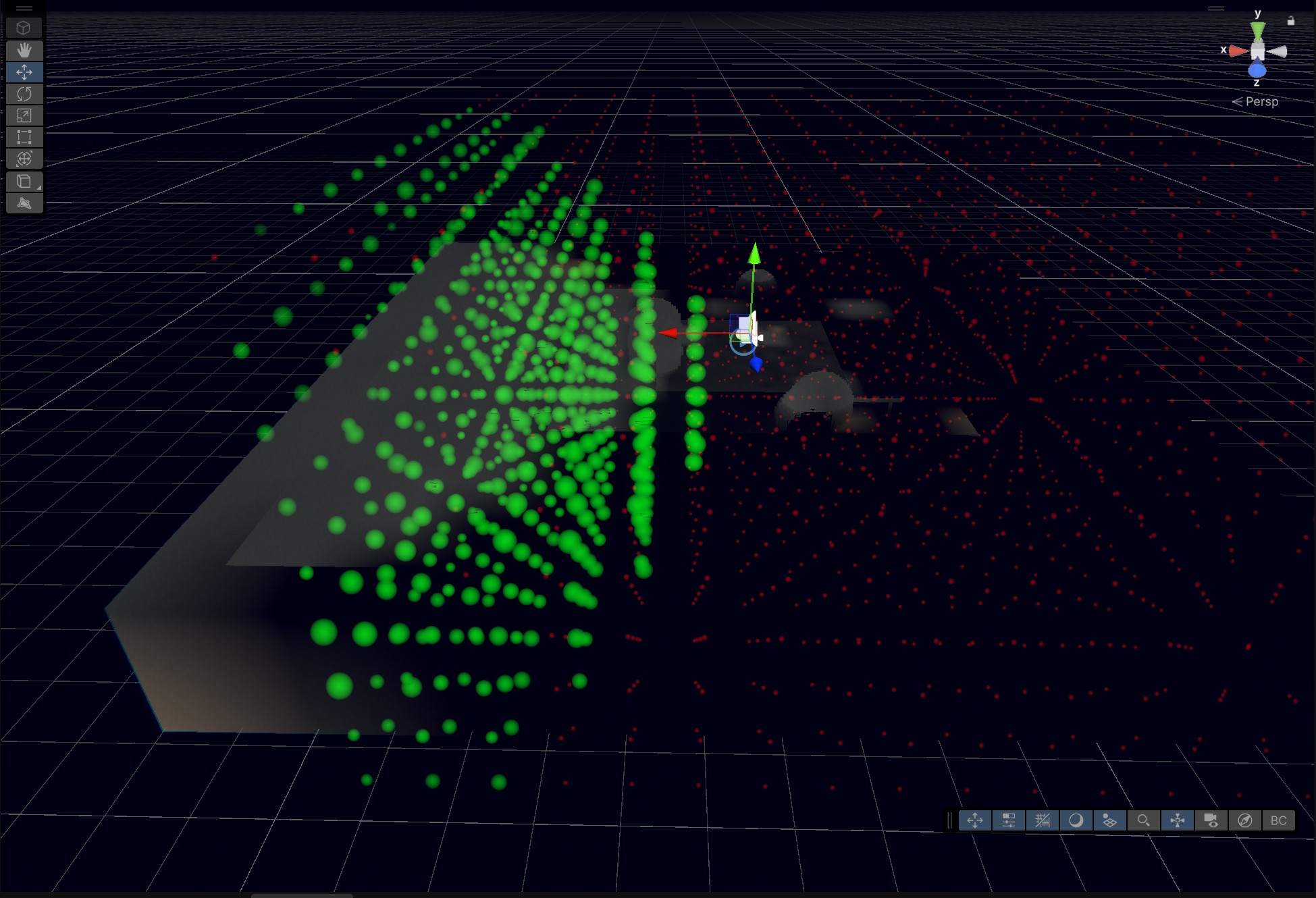

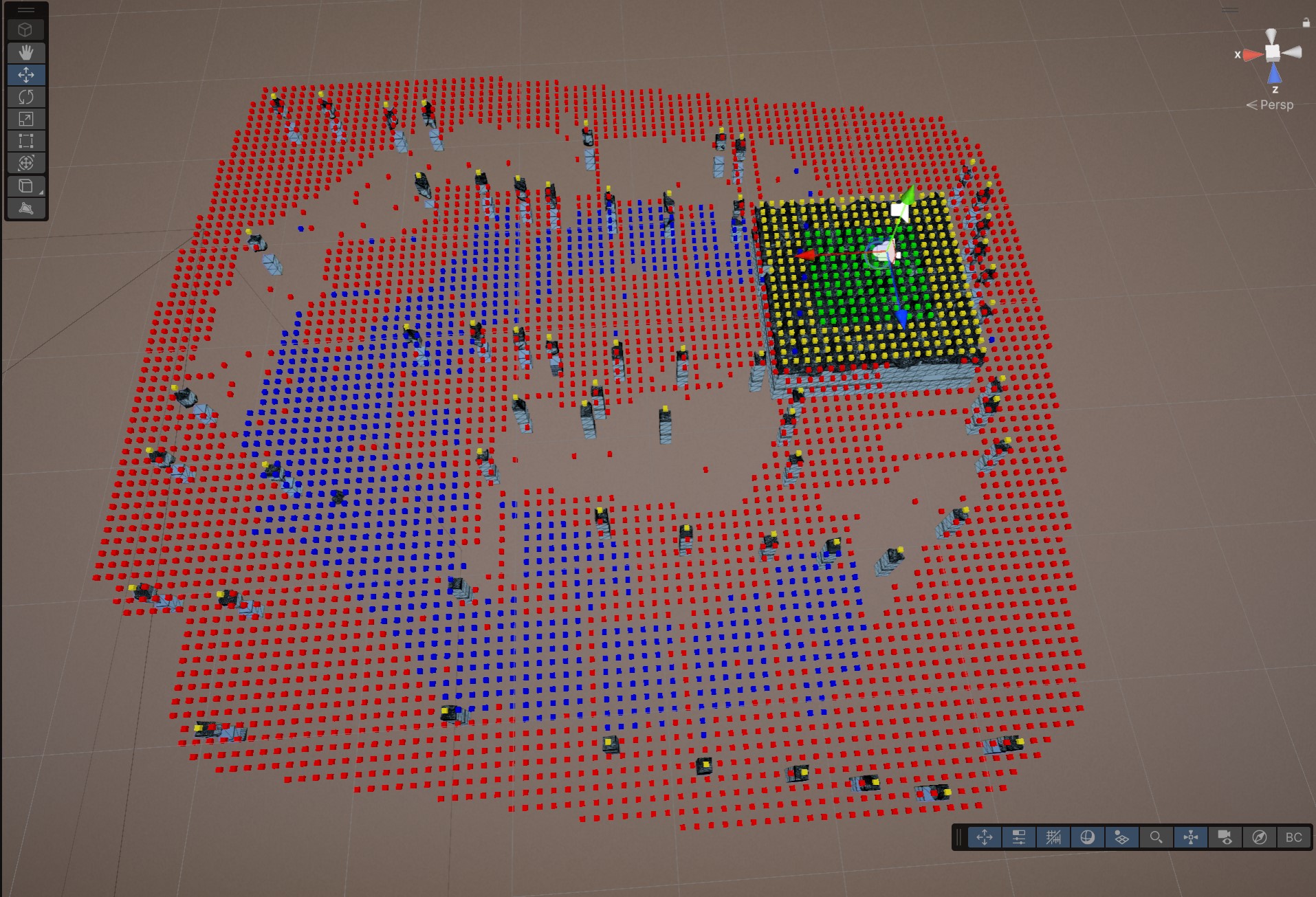

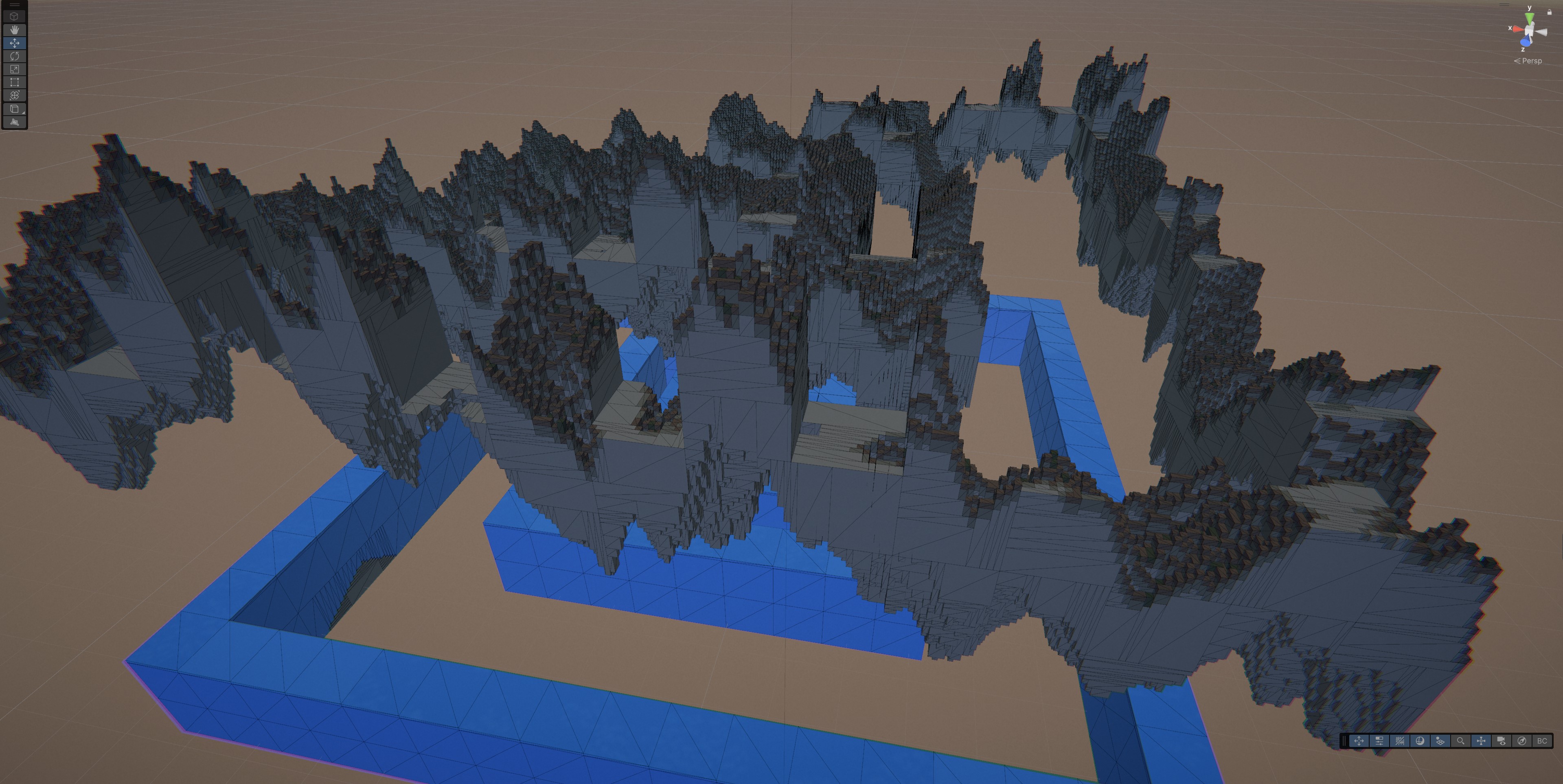

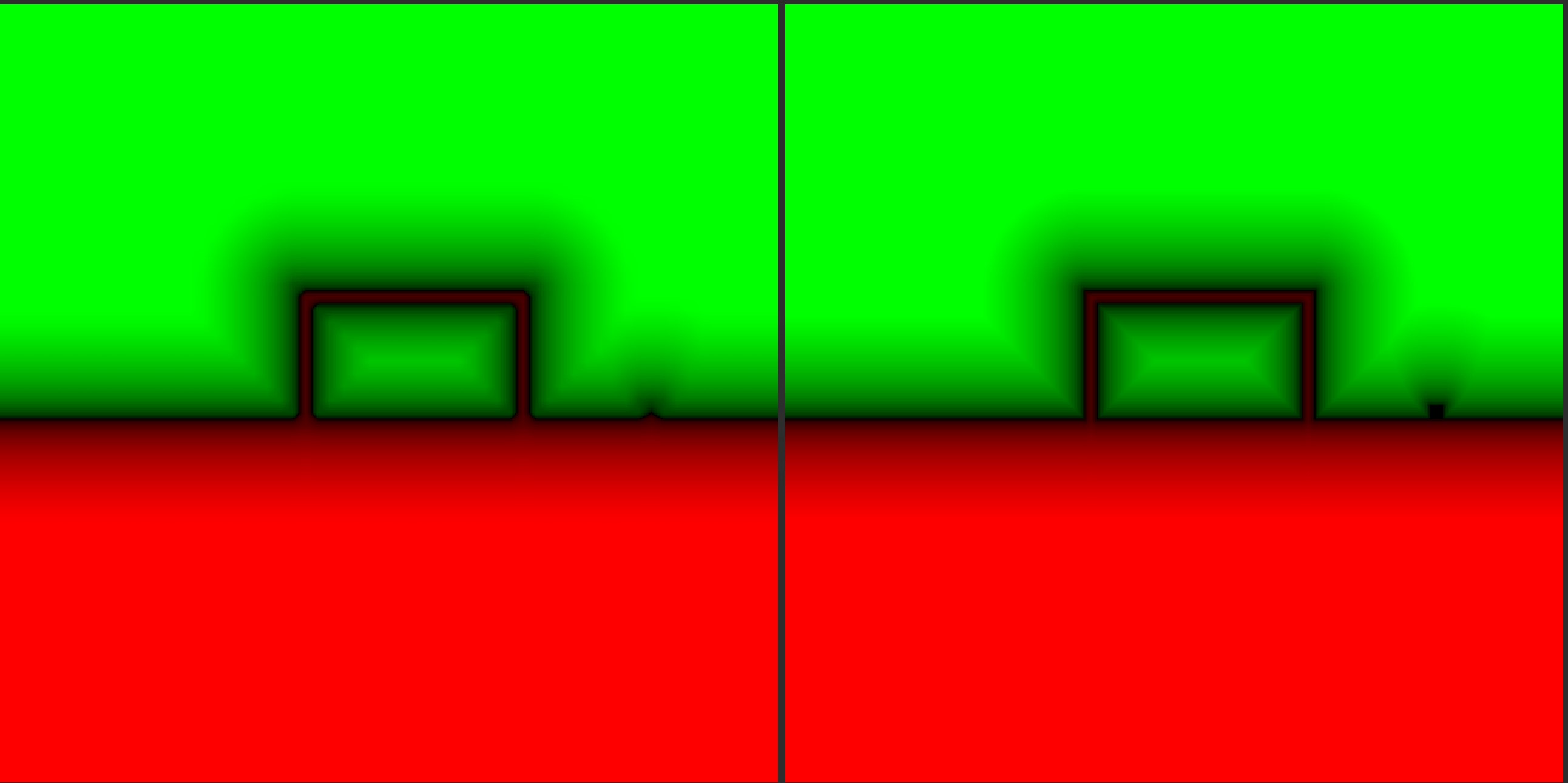

I may have found the optimal path: a Chebyshev-dilation-accelerated polycube EDT for the near SDF and a low-res far-field SDF for distant geometry. JFA was fast, but it tended to overestimate distances.

The current approach builds a truncated, neighbor-aware signed distance field per chunk by treating solid voxels as cubes (a polycube field), assembling a 34^3 halo, then using Chebyshev (box) dilations to classify most voxels and only doing an exact Euclidean cube-distance search in the boundary band. This gives usable, sign-correct distances that preserve 1-voxel-thin features, while keeping bandwidth low and allowing incremental rebuilds via small per-chunk bricks. The main optimization tricks are a bitset halo, separable dilation and a distance-sorted offset list, which together let most voxels early-out to +/- and minimize expensive searches. The downside is that it is still an approximate SDF due to truncation and grid sampling (not a full EDT), so conservative ray stepping is required and far-field coverage needs a separate coarse SDF.
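The idea can be sketched on the CPU in a few lines. This is a simplified illustration, not the GPU kernel: it uses Python sets instead of a bitset halo, ignores everything outside the `n^3` grid instead of reading neighbor chunks, and truncates at `radius + 0.5` so the far value stays a conservative lower bound. All names are hypothetical.

```python
import math
from itertools import product


def cube_distance(offset):
    """Euclidean distance from a voxel center to the unit cube at an integer offset."""
    return math.sqrt(sum(max(abs(d) - 0.5, 0.0) ** 2 for d in offset))


def sorted_offsets(radius):
    """All offsets within the Chebyshev radius, nearest cube first."""
    offs = [o for o in product(range(-radius, radius + 1), repeat=3) if o != (0, 0, 0)]
    return sorted(offs, key=cube_distance)


def dilate(cells, n, radius):
    """Separable Chebyshev (box) dilation: one 1D pass per axis."""
    for axis in range(3):
        grown = set()
        for c in cells:
            for s in range(-radius, radius + 1):
                p = list(c)
                p[axis] += s
                if 0 <= p[axis] < n:
                    grown.add(tuple(p))
        cells = grown
    return cells


def build_truncated_sdf(solid, n, radius):
    """Signed distance per voxel: negative inside solid, clamped to +/-(radius + 0.5)."""
    all_cells = set(product(range(n), repeat=3))
    empty = all_cells - solid
    near_solid = dilate(solid, n, radius)
    near_empty = dilate(empty, n, radius)
    offsets = sorted_offsets(radius)
    trunc = radius + 0.5
    sdf = {}
    for c in all_cells:
        inside = c in solid
        in_band = (c in near_empty) if inside else (c in near_solid)
        if not in_band:
            sdf[c] = -trunc if inside else trunc  # early-out, no search needed
            continue
        targets = empty if inside else solid
        for o in offsets:  # first hit in sorted order is the nearest cube
            if (c[0] + o[0], c[1] + o[1], c[2] + o[2]) in targets:
                d = min(cube_distance(o), trunc)
                sdf[c] = -d if inside else d
                break
    return sdf
```

For a single solid voxel at `(4, 4, 4)` in an 8^3 grid with `radius=2`, the voxel itself gets `-0.5`, its face neighbors get `0.5`, and everything outside the dilated band takes the early-out path with the truncation value `2.5`.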

Using an SM5-optimized, minimal-bandwidth approach and a shared-memory kernel, the time for a 64-chunk batch is well under 2 ms!! Plus, with an async queue it shows up only as minor background noise in the profiler :)

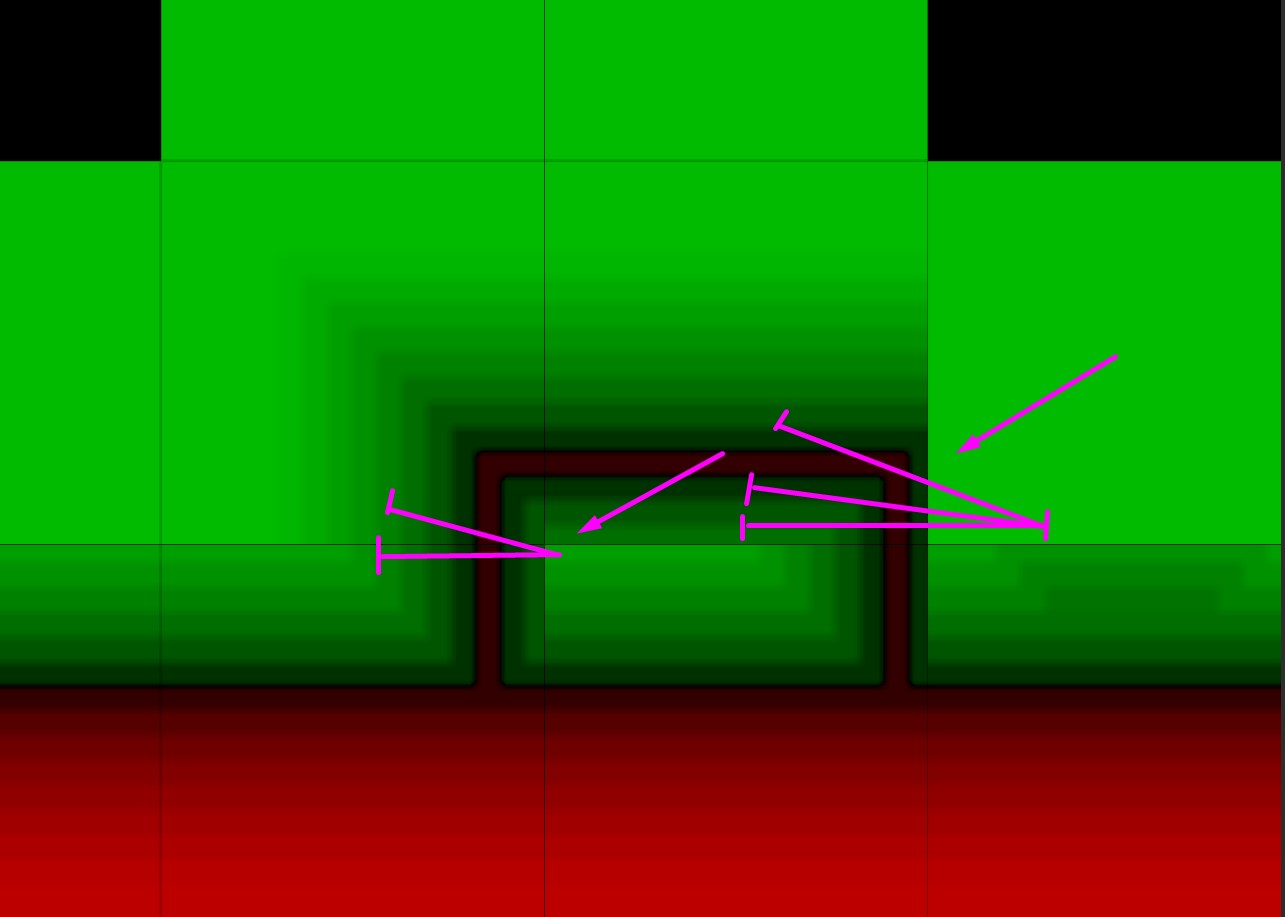

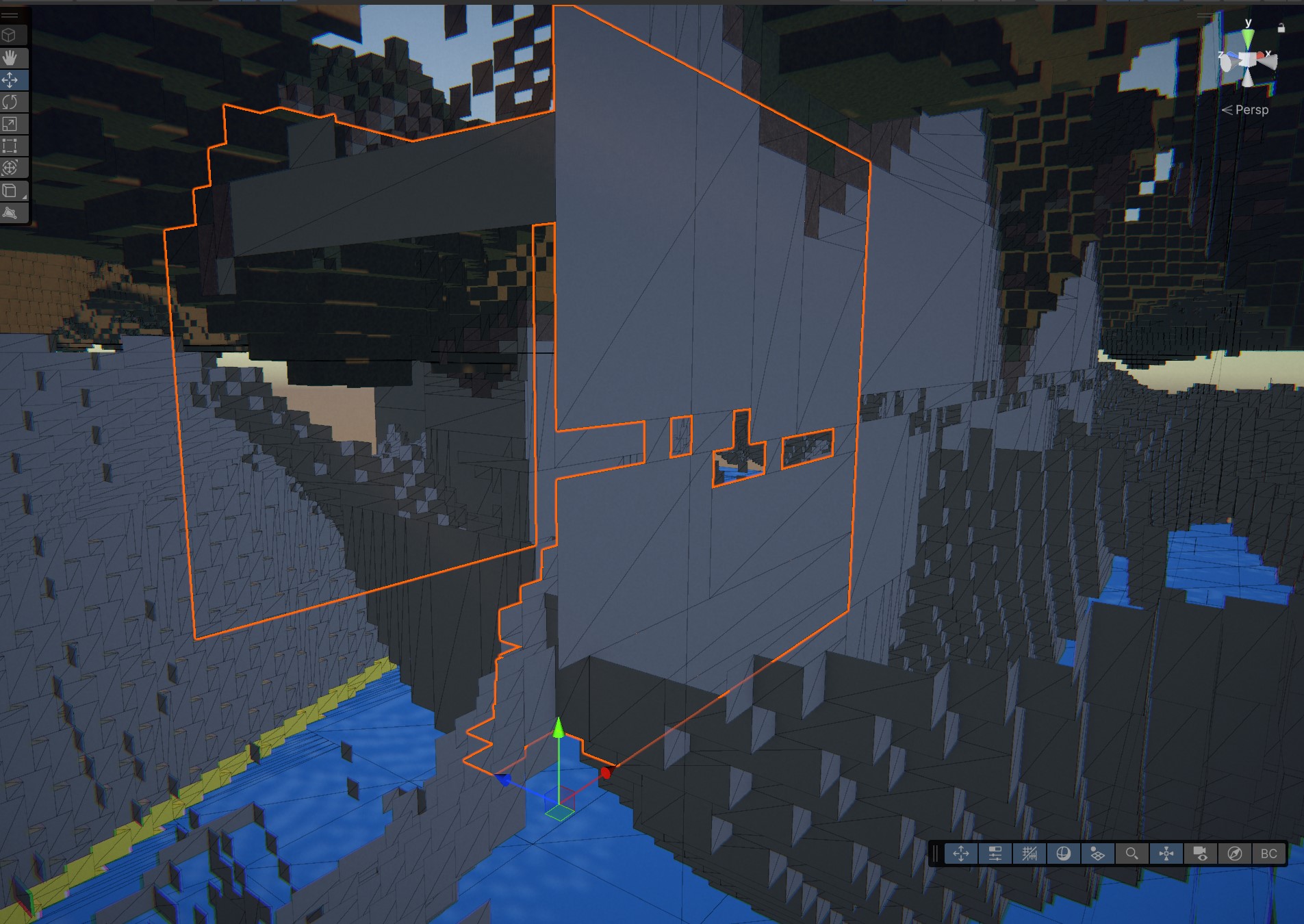

In the image: left side - real-time SDF, right side - brute-force, offline-calculated reference.